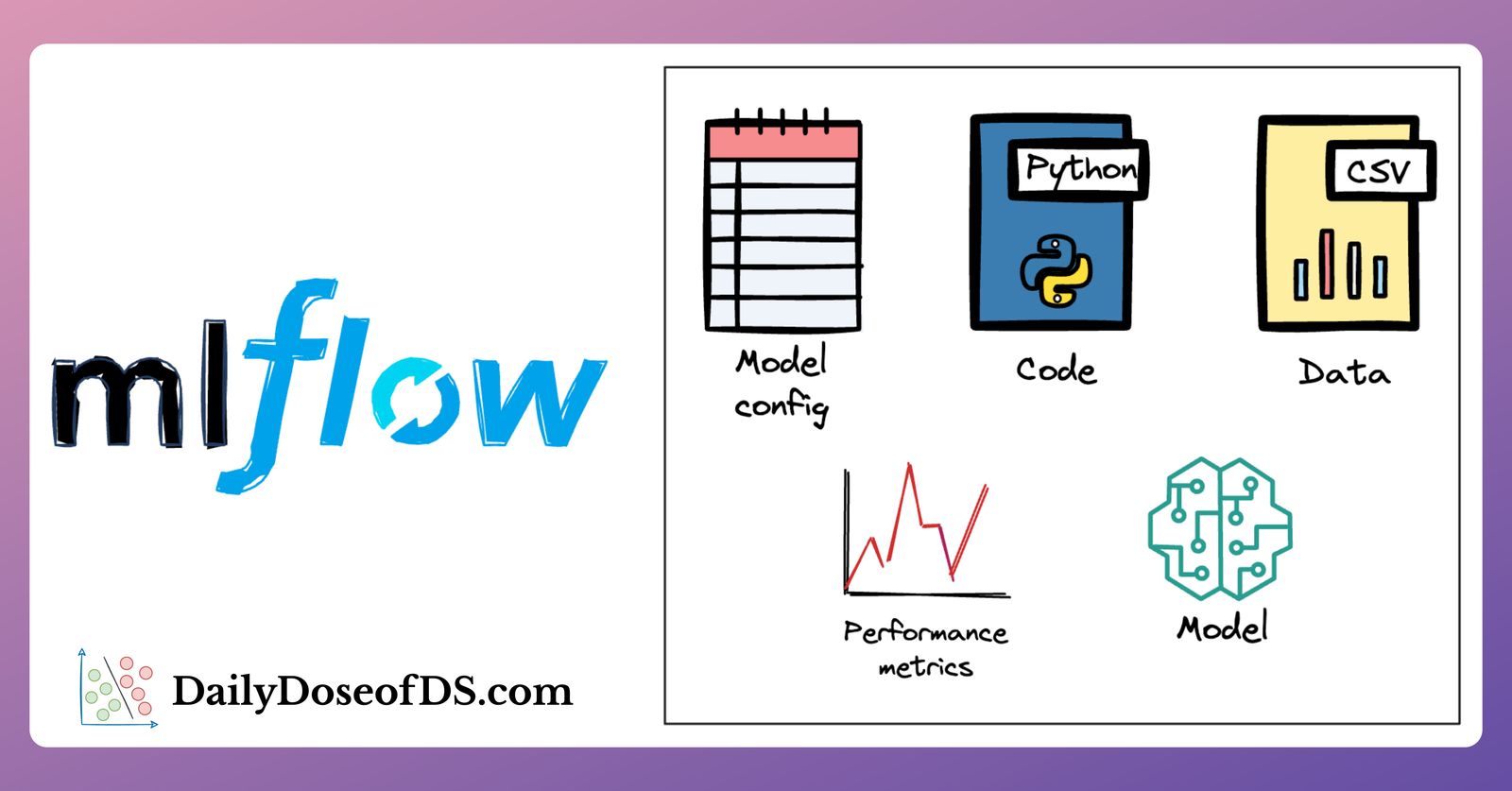

Optimize Your ML Development and Operations with MLflow

The guide that every data scientist must read to manage ML experiments like a pro.

· Avi Chawla

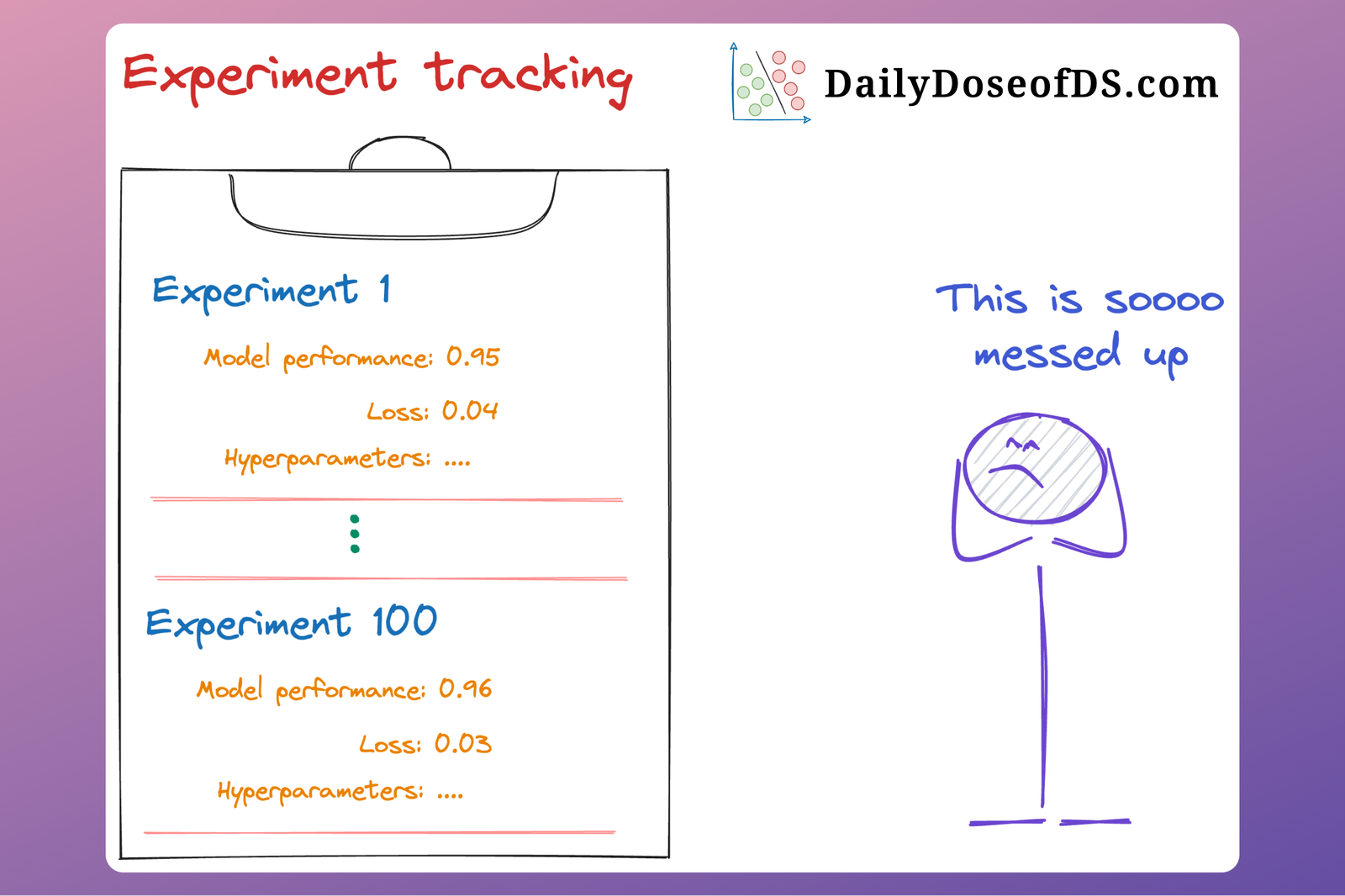

How to Streamline Your Machine Learning Workflow With DVC

The guide that every data scientist must read to manage ML experiments like a pro.

· Avi Chawla

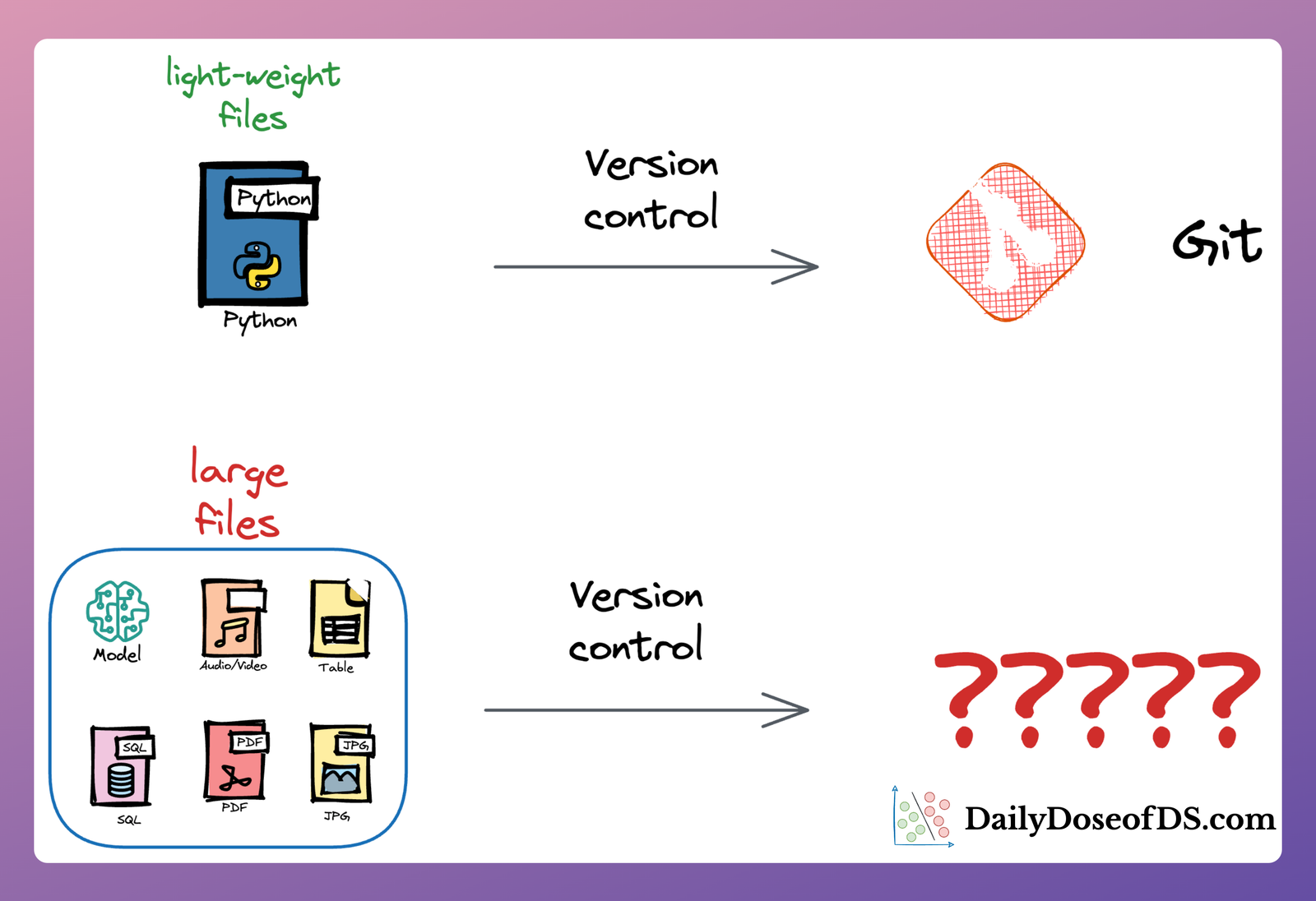

You Cannot Build Large Data Projects Until You Learn Data Version Control!

The underappreciated, yet critical, skill that most data scientists overlook.

· Avi Chawla

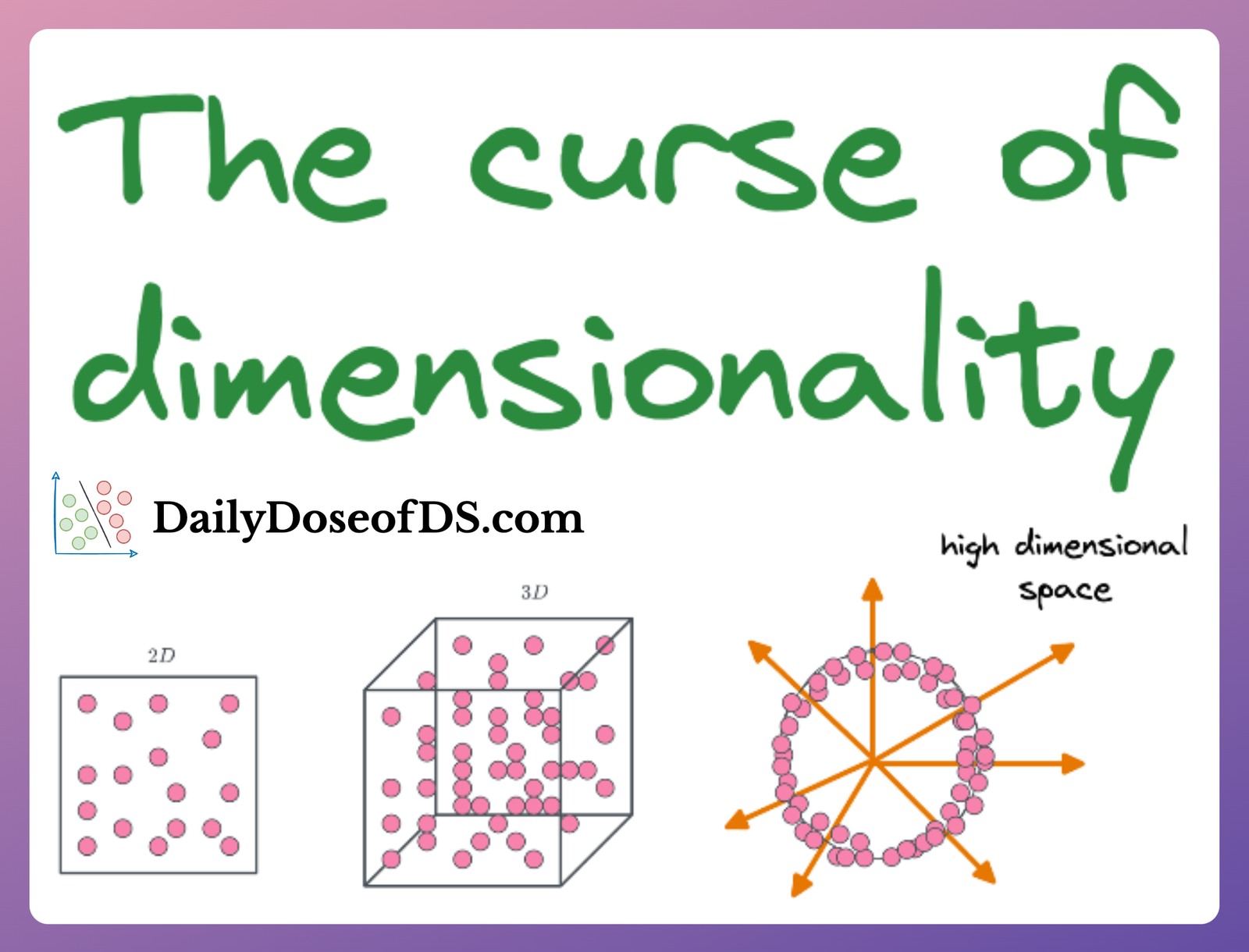

A Mathematical Deep Dive Into the Curse of Dimensionality

Mathematically understanding the surprising phenomena that arise when dealing with data in high dimensions.

· Avi Chawla

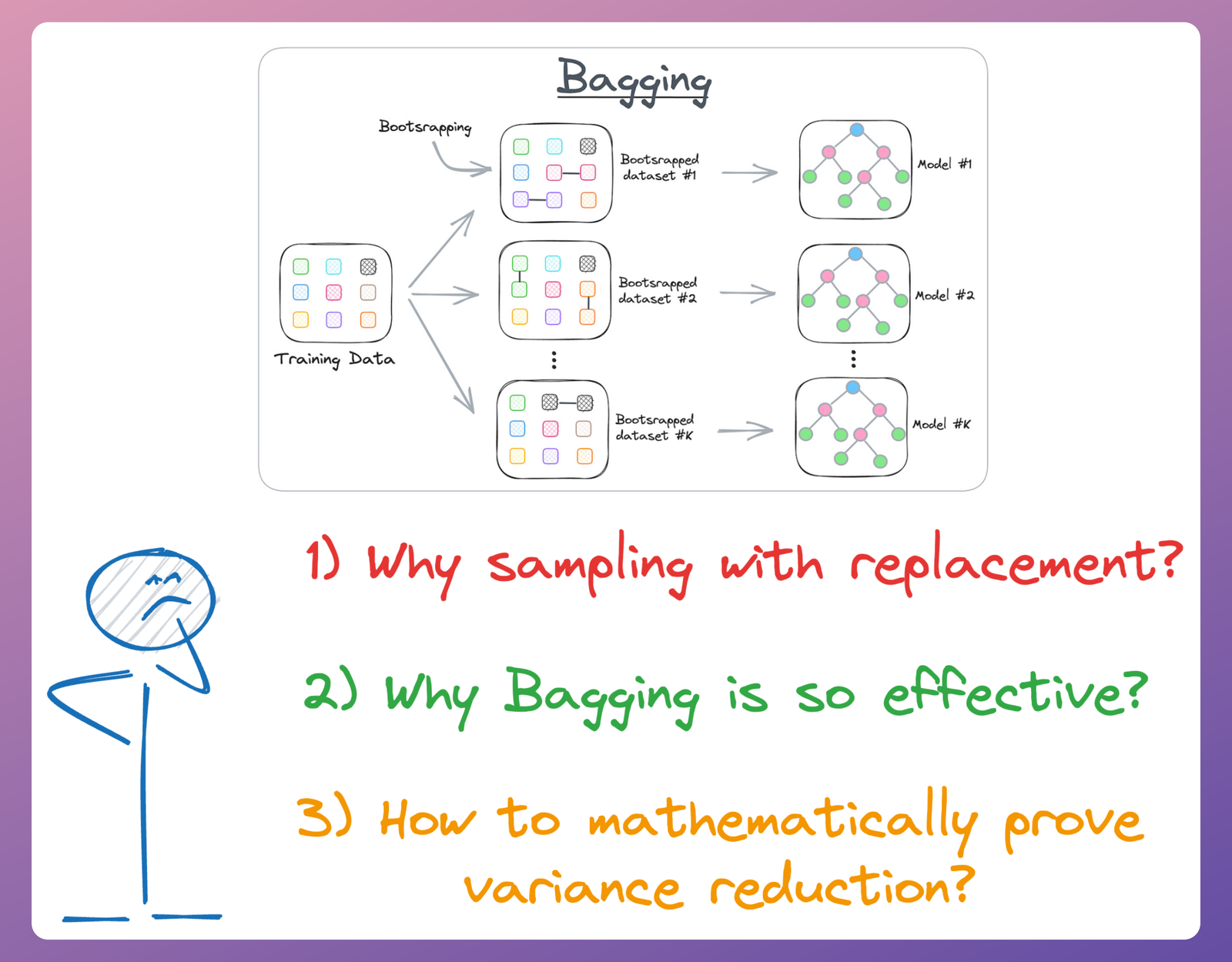

Why Bagging is So Ridiculously Effective At Variance Reduction?

Diving into the mathematical motivation for using bagging.

· Avi Chawla

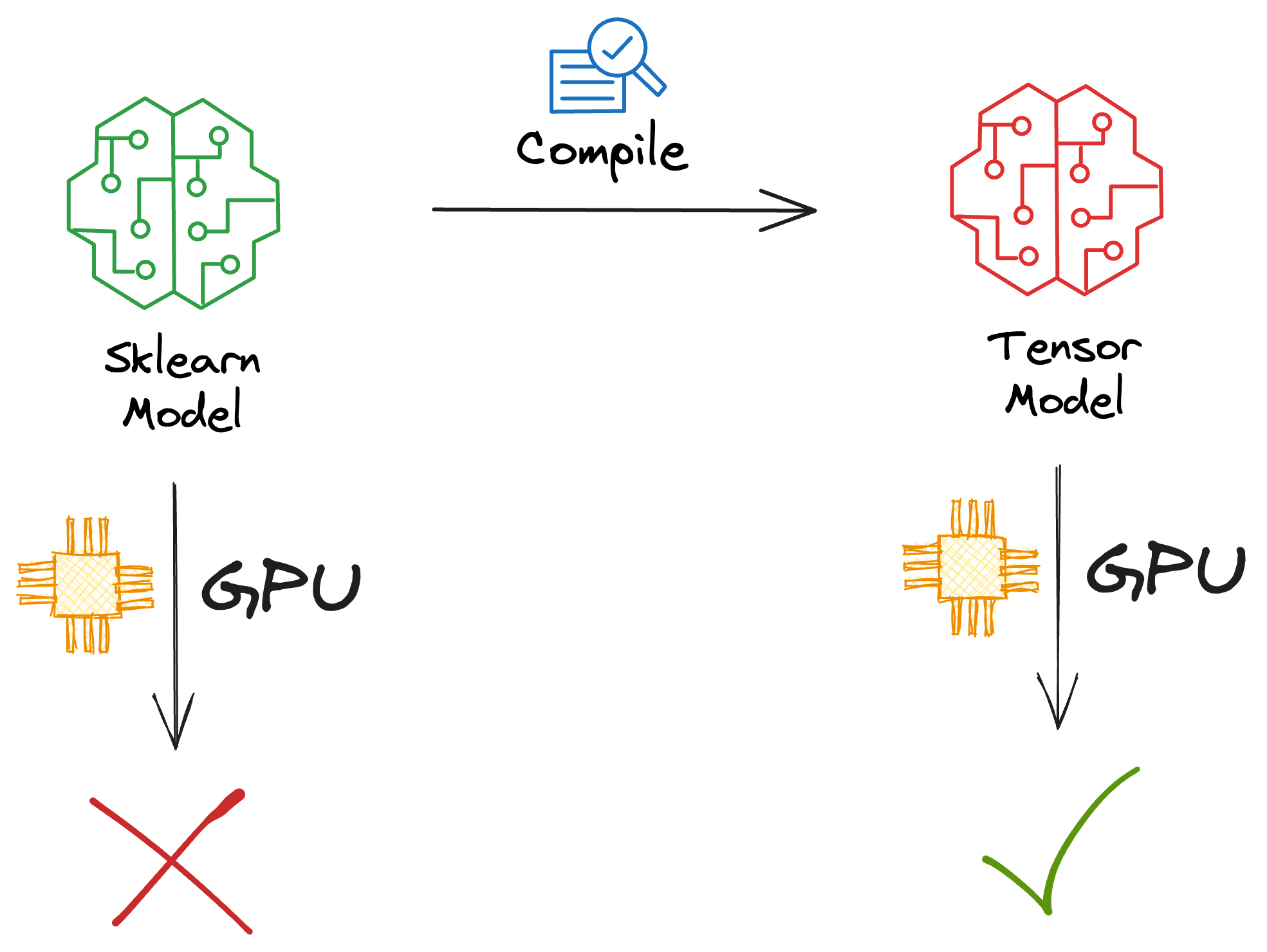

Sklearn Models are Not Deployment Friendly! Supercharge Them With Tensor Computations.

Speed up sklearn model inference by up to 50x with GPU support.

· Avi Chawla

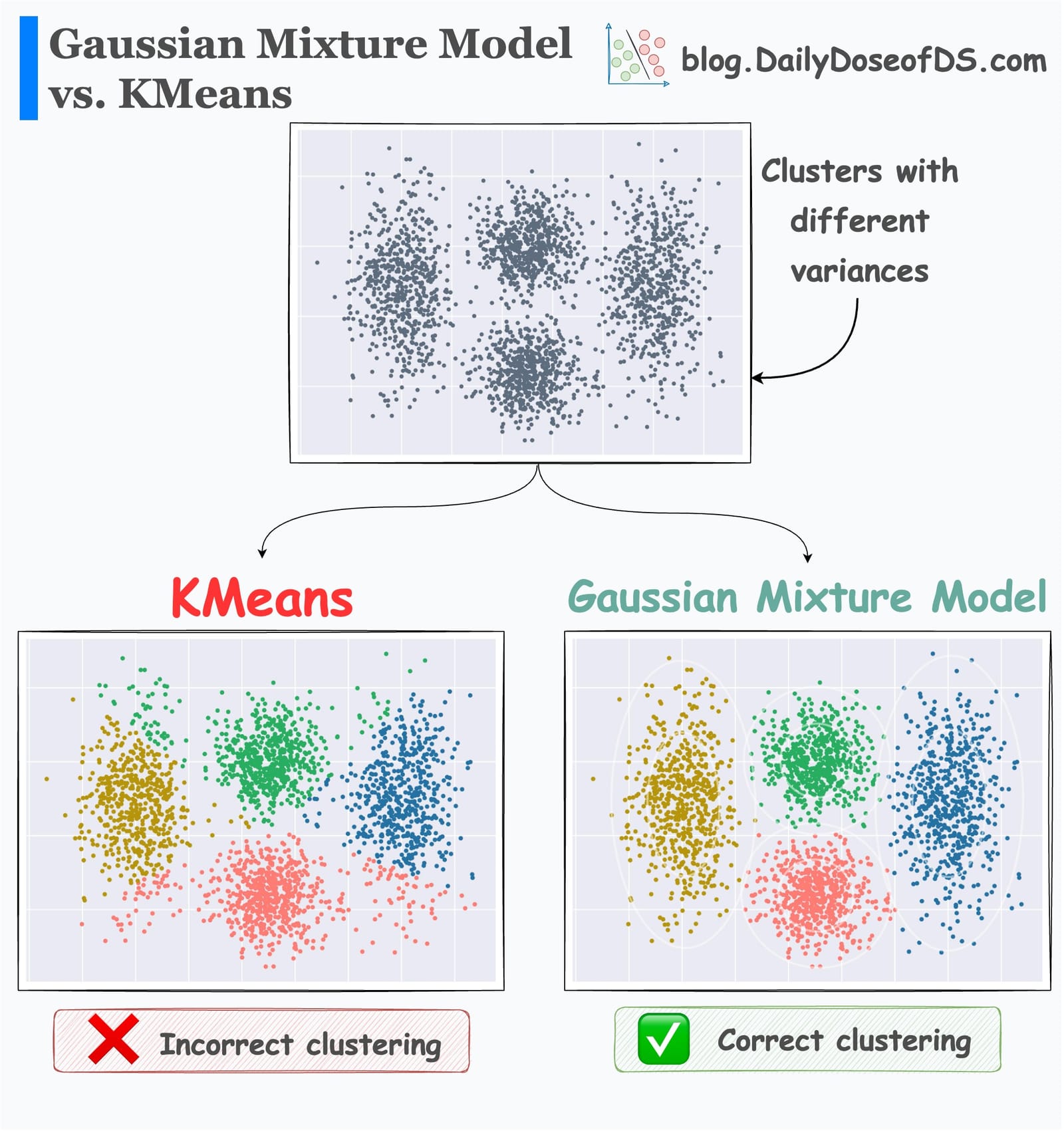

Gaussian Mixture Models (GMMs)

Gaussian Mixture Models: A more robust alternative to KMeans.

· Avi Chawla

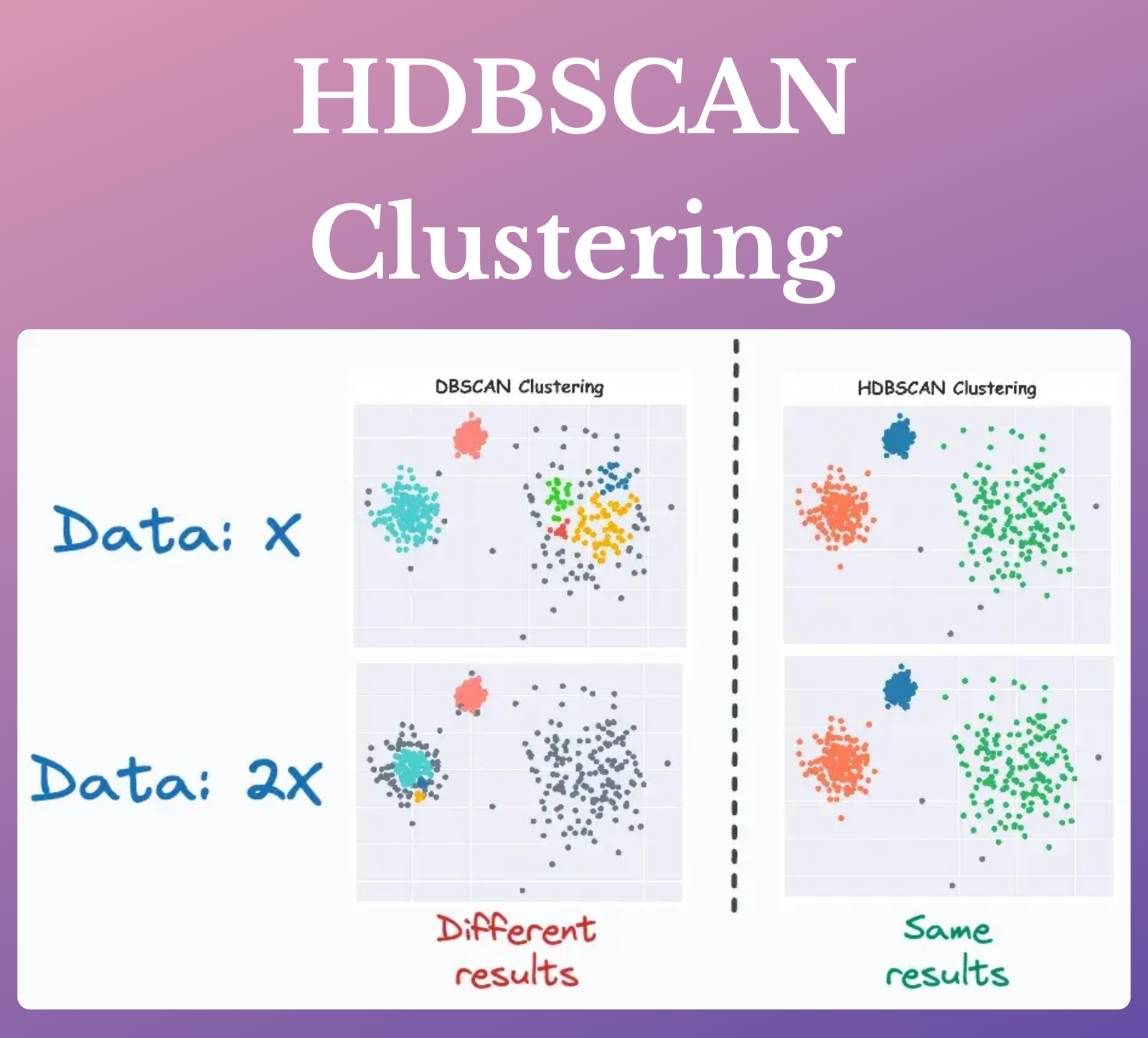

HDBSCAN: The Supercharged Version of DBSCAN (An Algorithmic Deep Dive)

A beginner-friendly introduction to HDBSCAN clustering and how it is superior to DBSCAN clustering.

· Avi Chawla

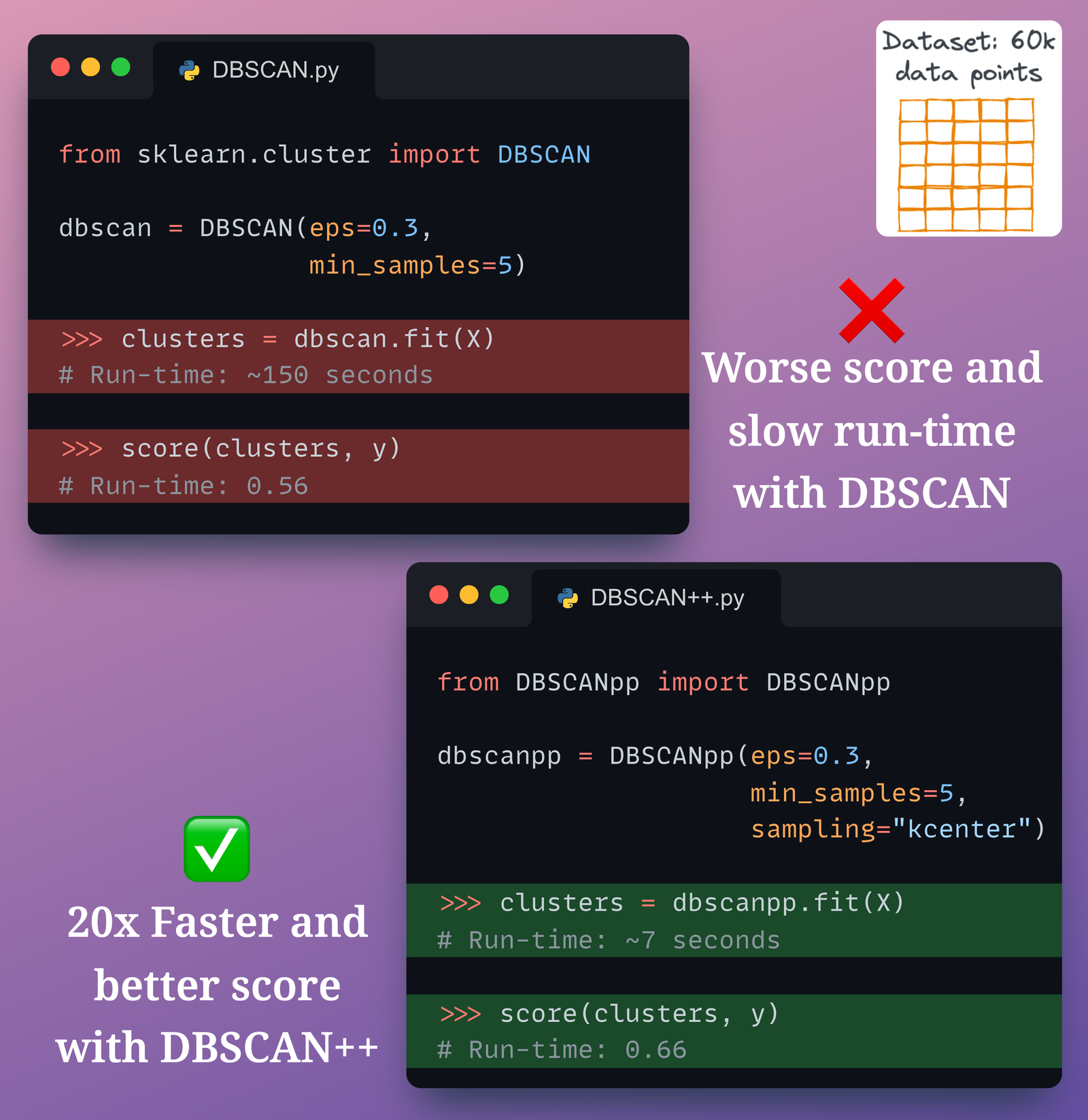

DBSCAN++: The Faster and Scalable Alternative to DBSCAN Clustering

Addressing major limitations of DBSCAN, the most popular density-based clustering algorithm.

· Avi Chawla

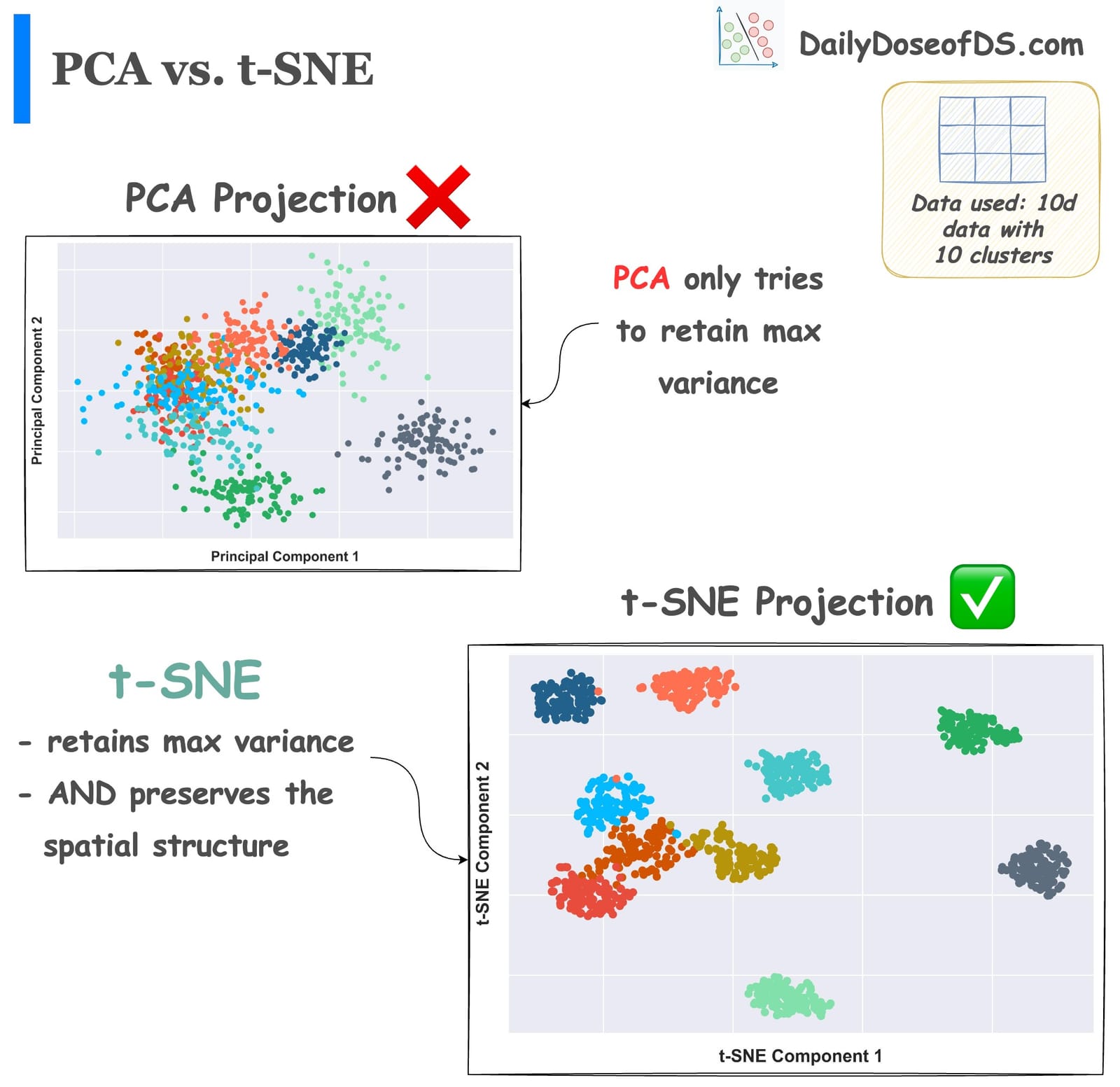

Formulating and Implementing the t-SNE Algorithm From Scratch

The most extensive visual guide to never forget how t-SNE works.

· Avi Chawla

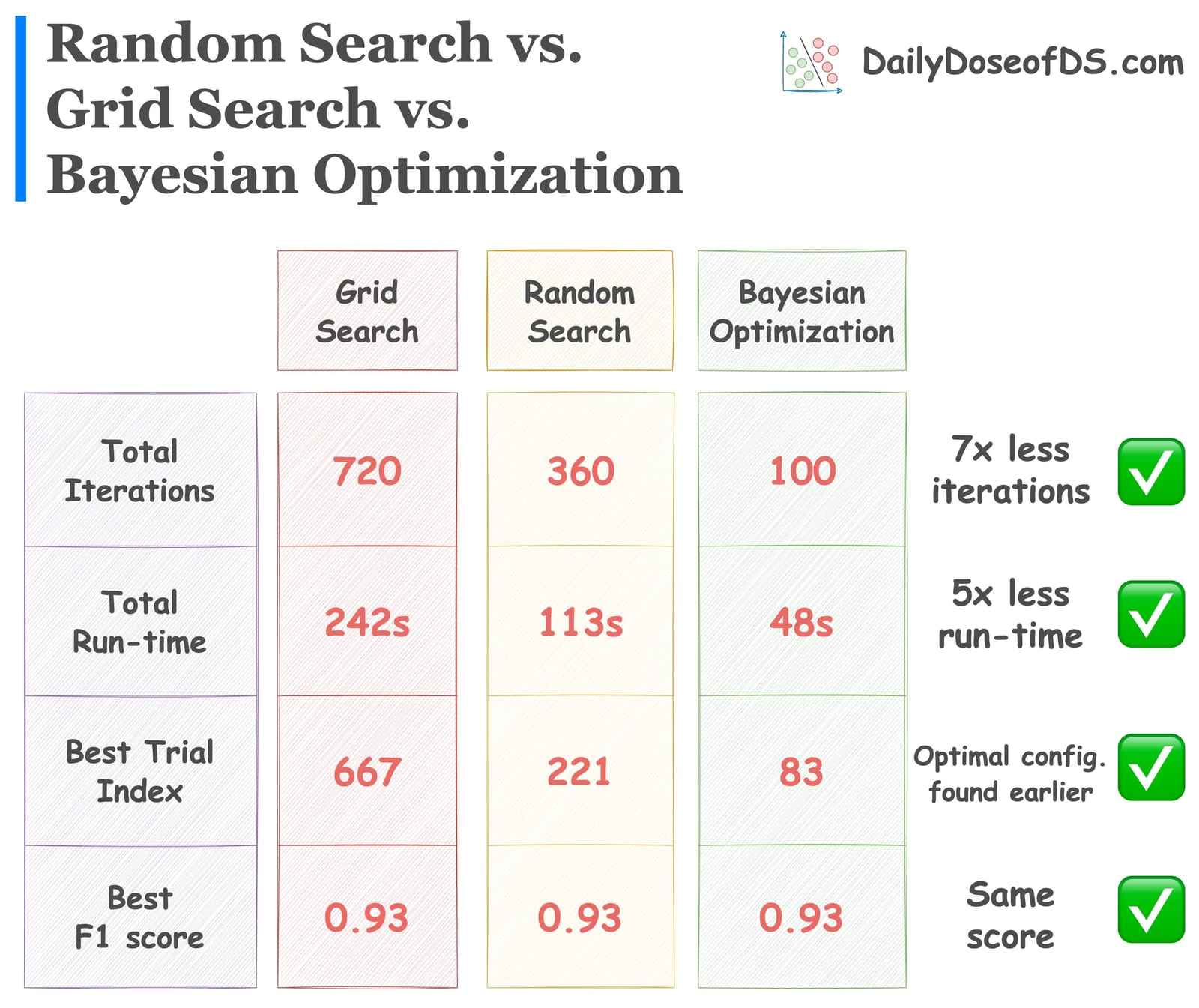

Bayesian Optimization for Hyperparameter Tuning

The caveats of grid search and random search and how Bayesian optimization addresses them.

· Avi Chawla

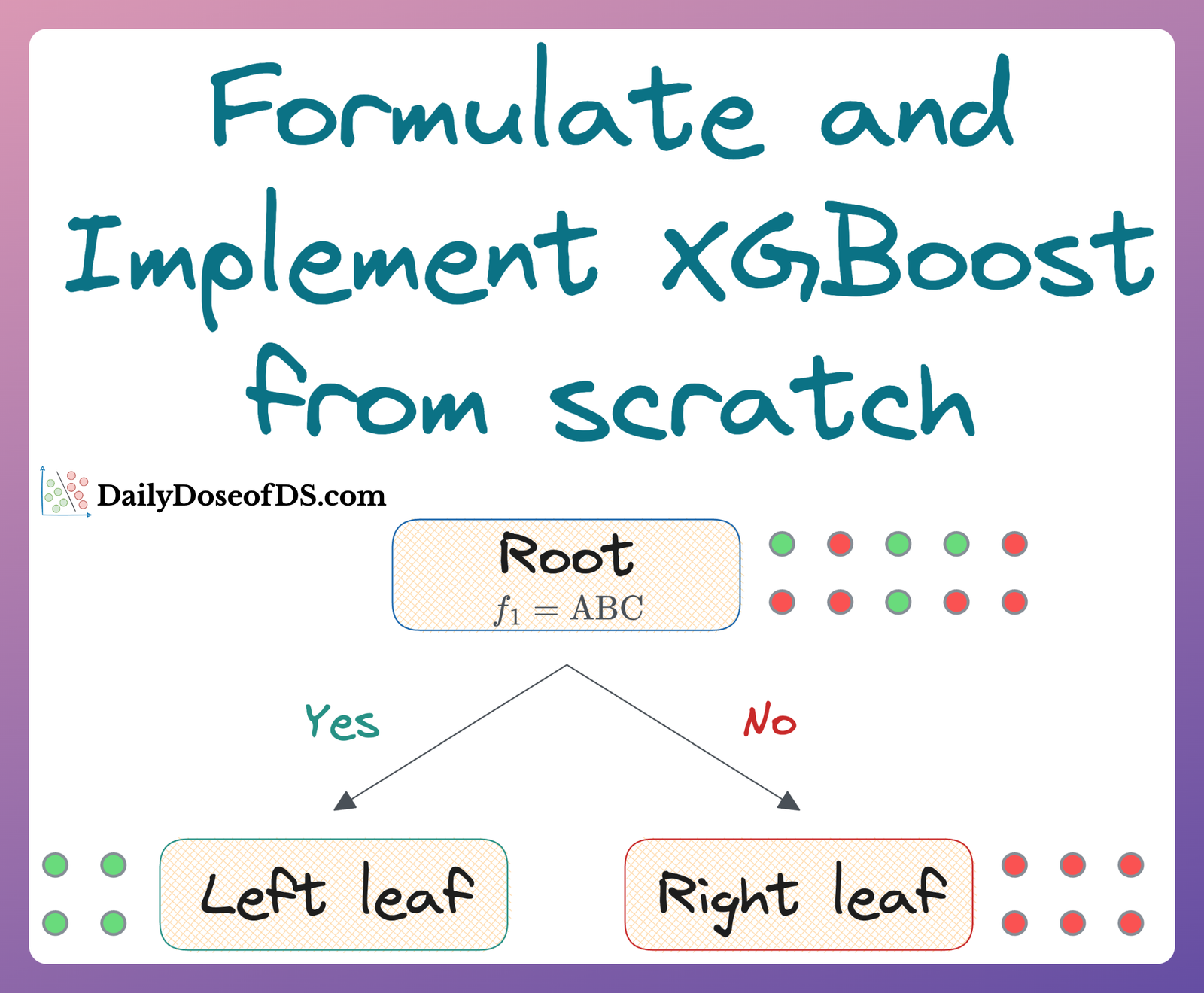

Formulating and Implementing XGBoost From Scratch

An extensive visual guide to never forget how XGBoost works.

· Avi Chawla

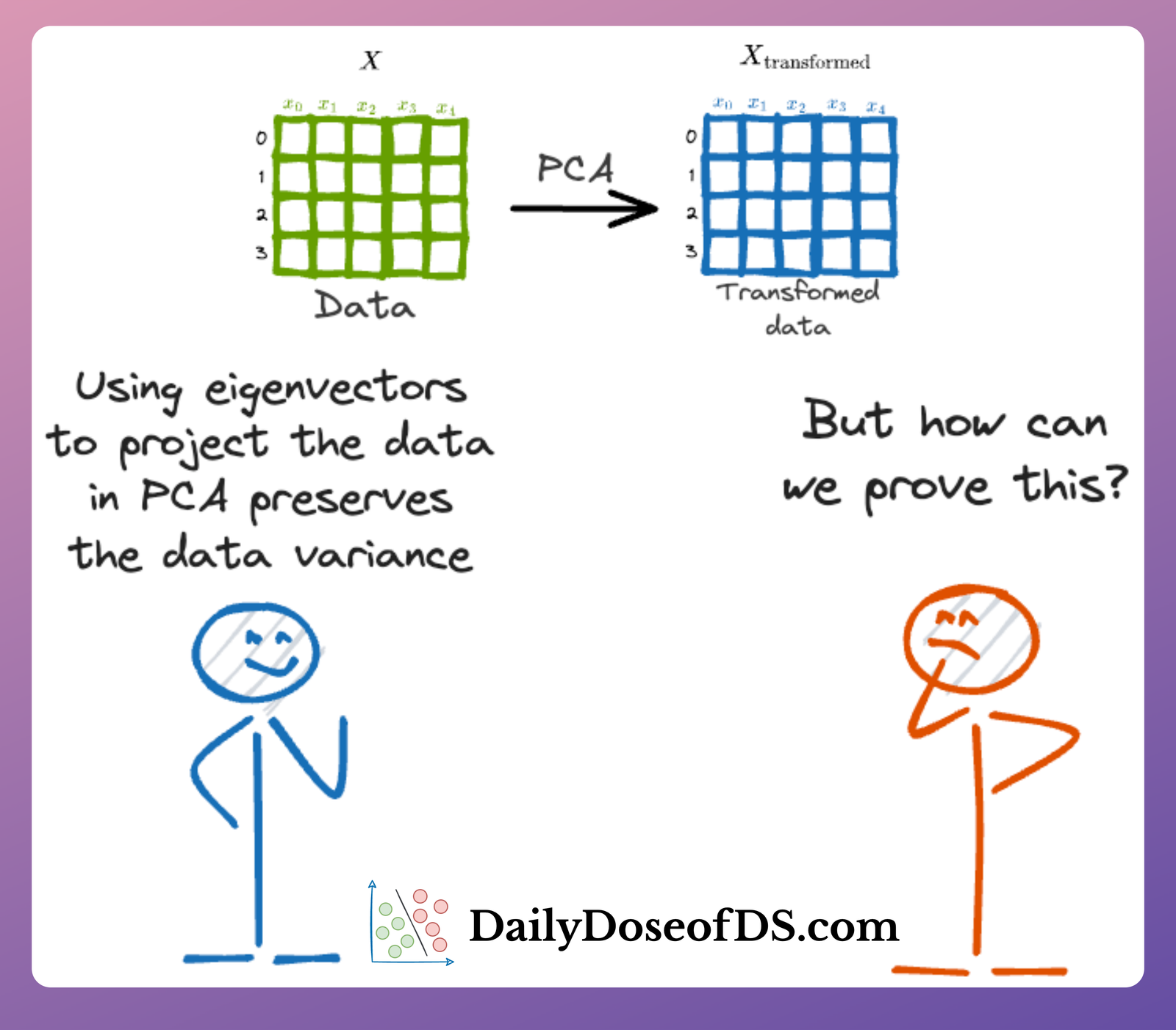

Formulating the Principal Component Analysis (PCA) Algorithm From Scratch

Approaching PCA as an optimization problem.

· Avi Chawla

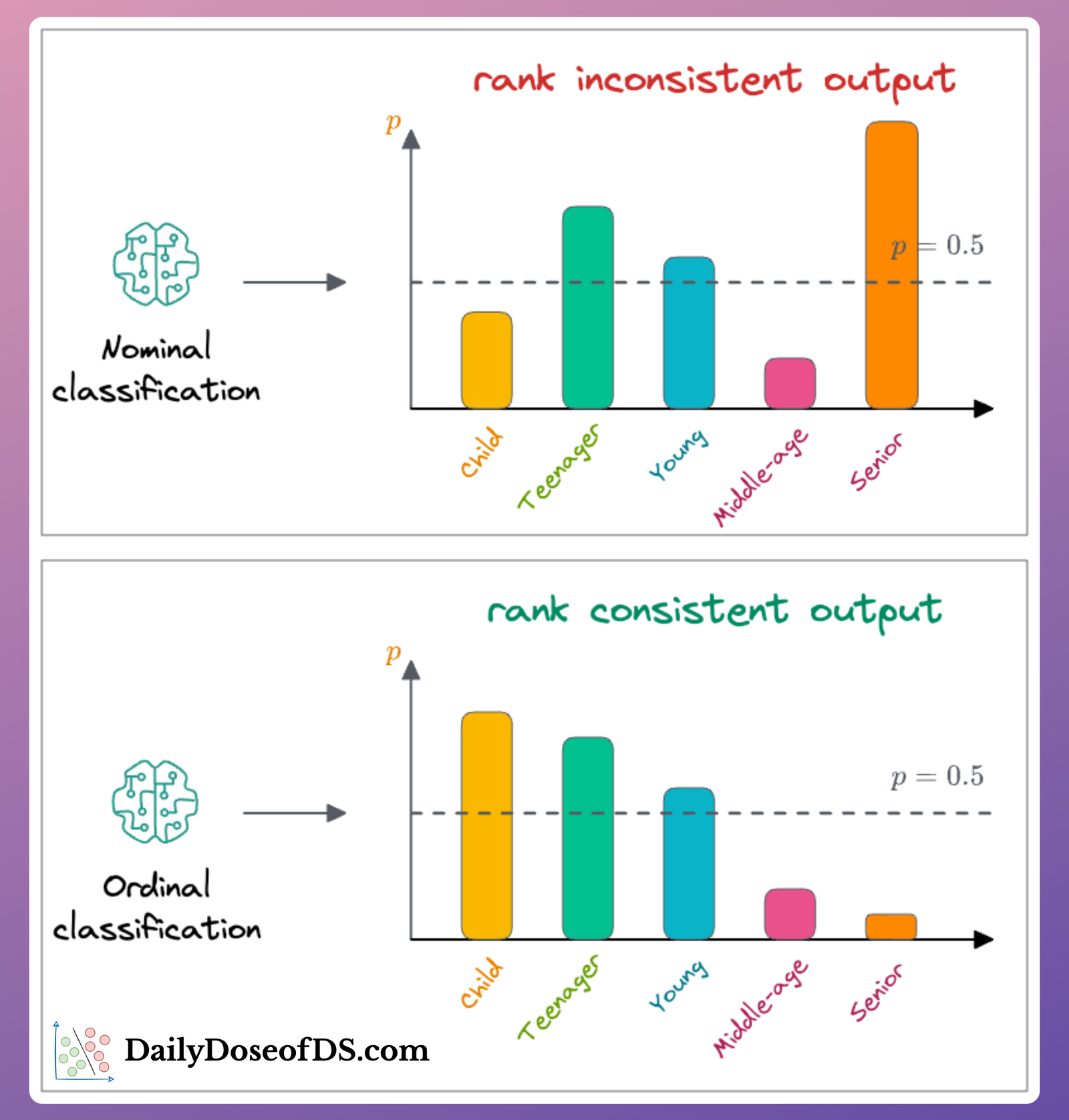

You Are Probably Building Inconsistent Classification Models Without Even Realizing

The limitations of always using cross-entropy loss in ordinal datasets.

· Avi Chawla

Why R-squared is a Flawed Regression Metric?

The lesser-known limitations of the R-squared metric.

· Avi Chawla

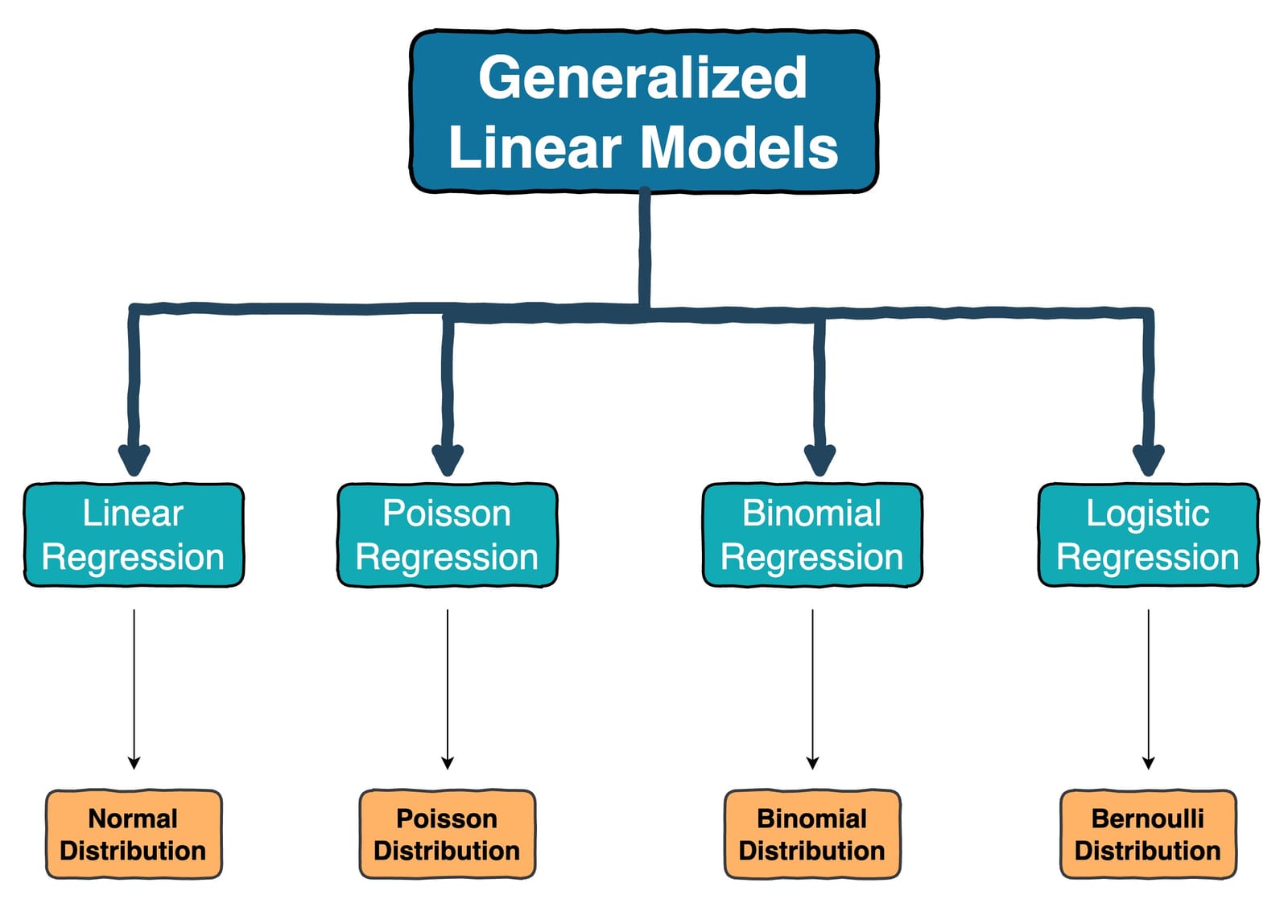

Generalized Linear Models (GLMs): The Supercharged Linear Regression

The limitations of linear regression and how GLMs solve them.

· Avi Chawla

Why Sklearn’s Logistic Regression Has no Learning Rate Hyperparameter?

What are we missing here?

· Avi Chawla

Why Do We Use log-loss To Train Logistic Regression?

The origin of log-loss.

· Avi Chawla

Why Do We Use Sigmoid in Logistic Regression?

The origin of the Sigmoid function and a guide on modeling classification datasets.

· Avi Chawla

The Probabilistic Origin of Regularization

Where did the regularization term come from?

· Avi Chawla

Where Did The Assumptions of Linear Regression Originate From?

The most extensive and in-depth guide to linear regression.

· Avi Chawla

Why is ReLU a Non-Linear Activation Function?

The most intuitive guide to the ReLU activation function ever.

· Avi Chawla