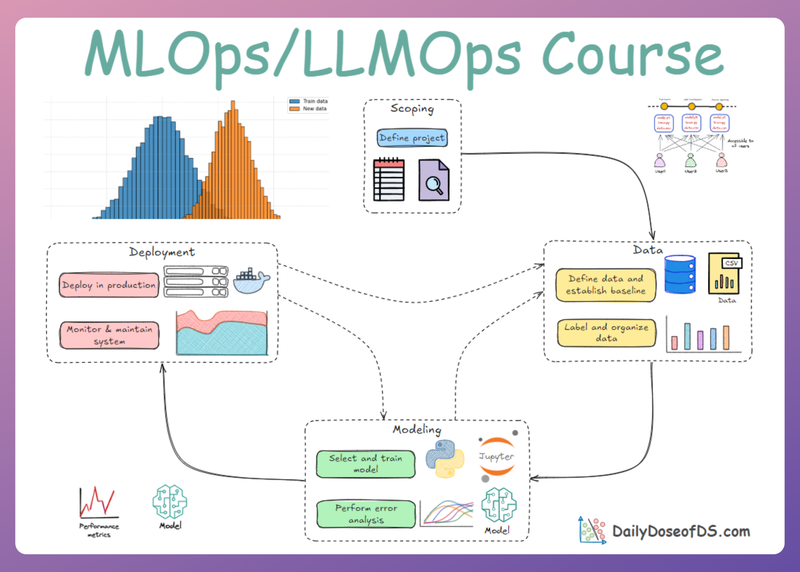

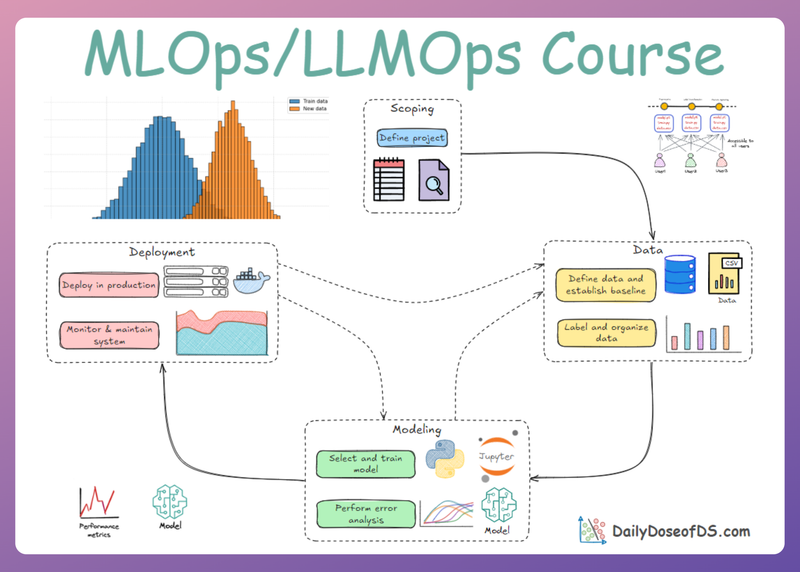

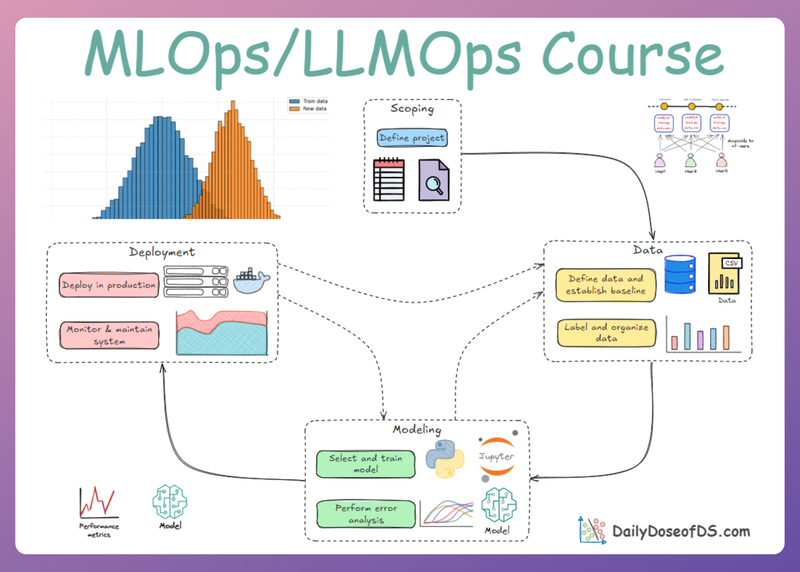

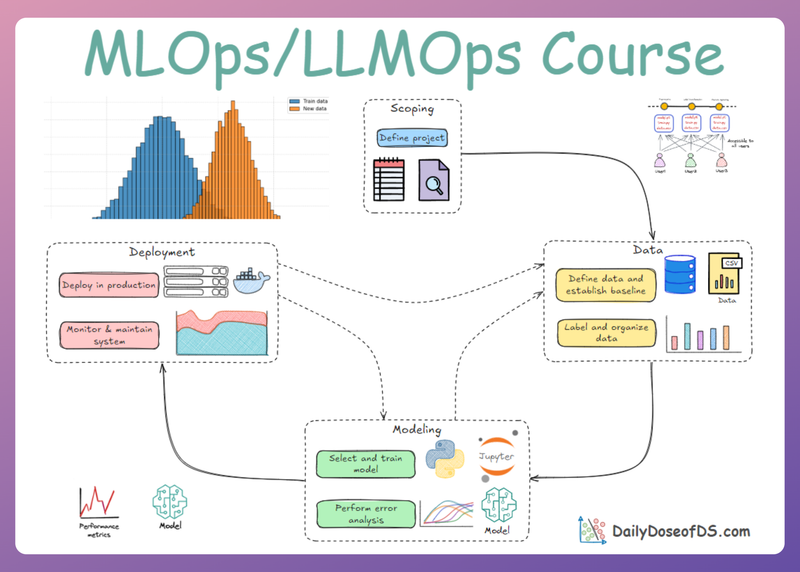

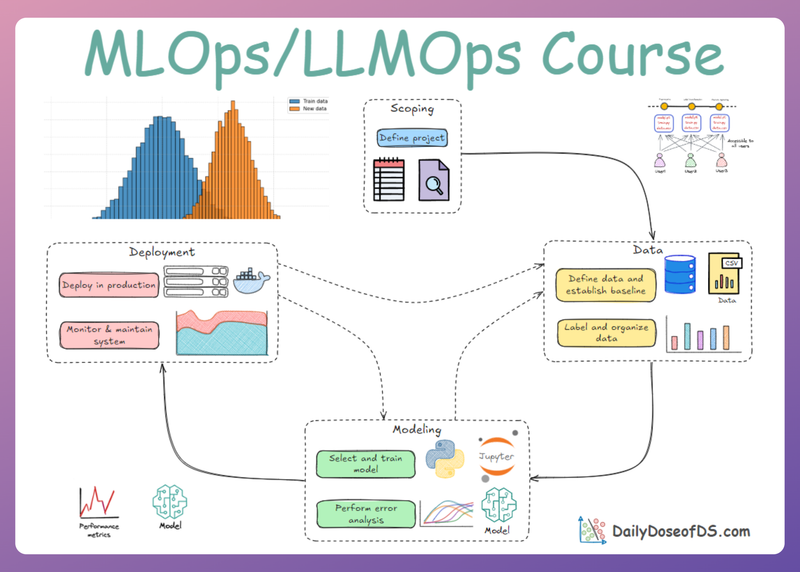

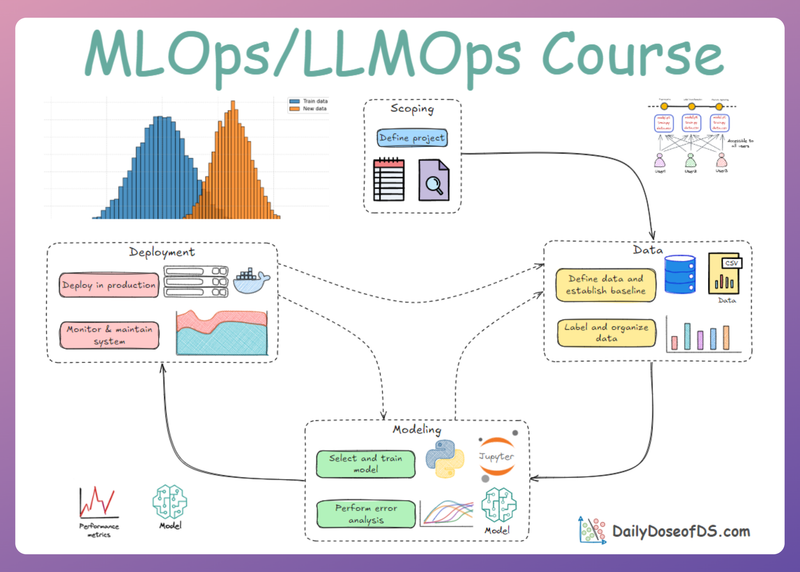

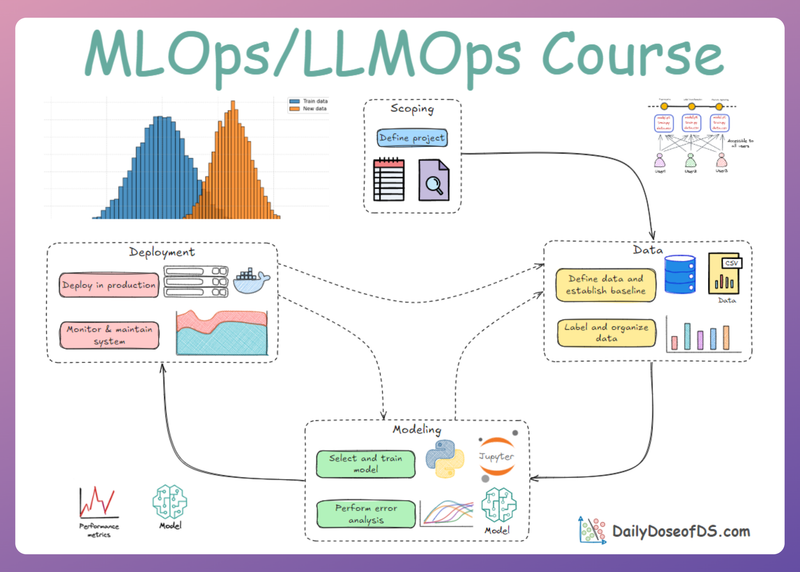

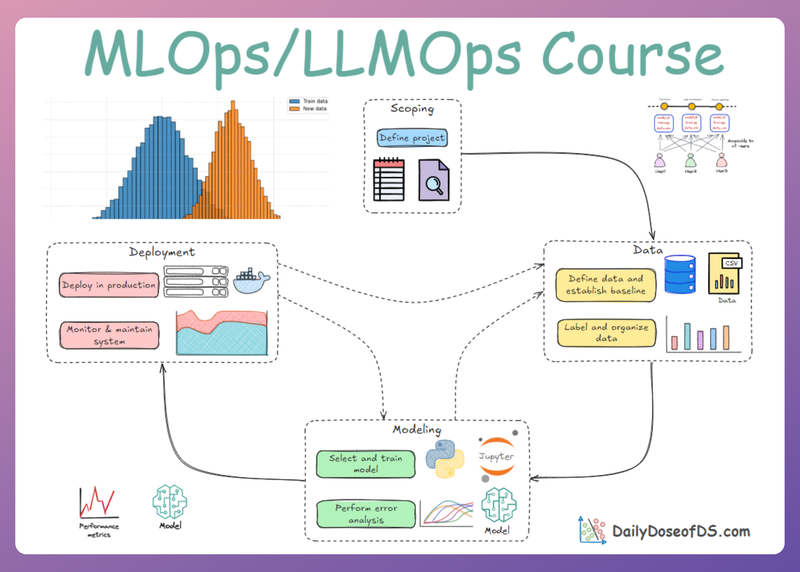

Model Development and Optimization: Compression and Portability

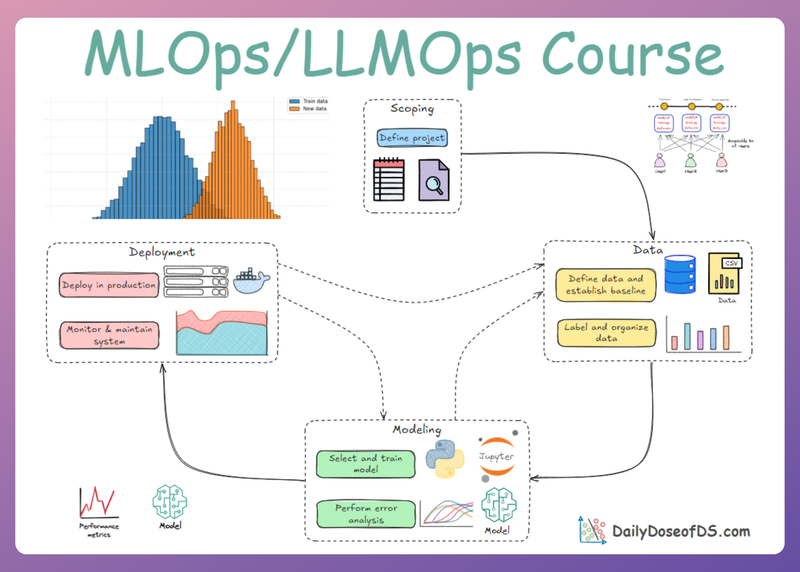

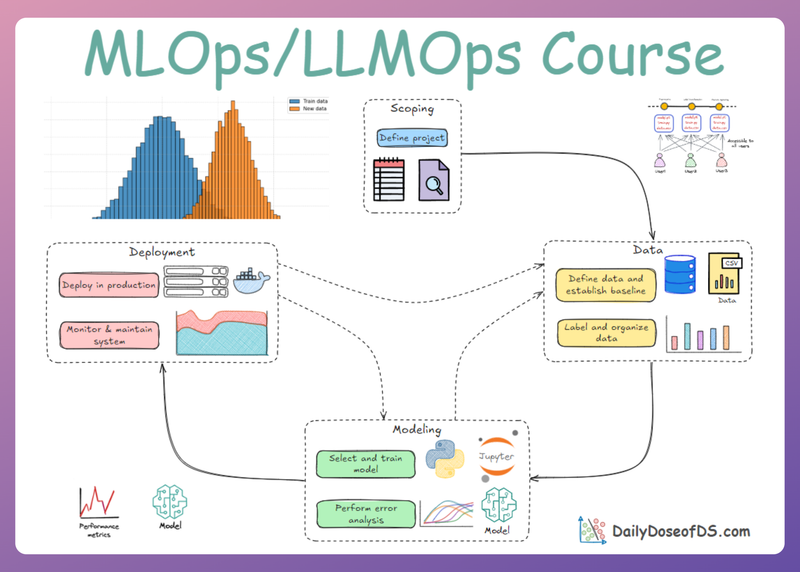

MLOps Part 10: A comprehensive guide to model compression covering knowledge distillation, low-rank factorization, and quantization, followed by ONNX and ONNX Runtime as the bridge from training frameworks to fast, portable production inference.