Accuracy Can Be Deceptive

... and how to catch it.

TODAY'S ISSUE

If some technique improves the model's performance metric, we can say it was effective.

At times, however, you may be making good progress in improving the model while “Accuracy” does not reflect that (yet).

I have seen this when building probabilistic multiclass-classification models.

Let's understand!

In probabilistic multiclass classification models, Accuracy is determined using the highest probability output label:
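As a minimal sketch of this rule, the snippet below derives labels and Accuracy from predicted probabilities with NumPy. The probability matrix and true labels are made-up values for illustration only.

```python
import numpy as np

# Hypothetical predicted probabilities for 4 samples over 3 classes (rows sum to 1).
probs = np.array([
    [0.7, 0.2, 0.1],
    [0.1, 0.3, 0.6],
    [0.4, 0.5, 0.1],
    [0.2, 0.3, 0.5],
])
y_true = np.array([0, 2, 0, 1])  # hypothetical true labels

# The predicted label is the class with the highest probability.
y_pred = probs.argmax(axis=1)

# Accuracy is the fraction of samples whose predicted label matches the true one.
accuracy = (y_pred == y_true).mean()
print(y_pred, accuracy)
```

Only the position of the largest probability matters here; how the remaining probability mass is distributed has no effect on the score.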

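Now imagine two versions of a model, “Version 1” and “Version 2”, predicting on the same sample, where Version 2 ranks the true label higher than Version 1 does but still not first.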

In both cases, the final prediction is incorrect, which is okay.

However, going from “Version 1” to “Version 2” did improve the model.

Nonetheless, Accuracy does not consider this since it only cares about the final prediction.
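To make this concrete, here is a small sketch with invented numbers (they are not taken from the original figure). Both versions get the sample wrong, yet the true label moves up in the ranking. The helper `rank_of_true_label` is a hypothetical name introduced only for this illustration.

```python
import numpy as np

true_label = 0  # hypothetical true class for a single sample

# Hypothetical outputs of two model versions over 4 classes.
probs_v1 = np.array([0.10, 0.40, 0.30, 0.20])  # true class has the lowest probability
probs_v2 = np.array([0.35, 0.40, 0.15, 0.10])  # true class has the second-highest probability

def rank_of_true_label(probs, label):
    """Return the 1-based rank of `label` when classes are sorted by descending probability."""
    order = np.argsort(-probs)
    return int(np.where(order == label)[0][0]) + 1

for name, probs in [("Version 1", probs_v1), ("Version 2", probs_v2)]:
    correct = bool(probs.argmax() == true_label)
    rank = rank_of_true_label(probs, true_label)
    print(f"{name}: correct={correct}, rank of true label={rank}")
```

Accuracy treats both versions identically because the top-ranked class is wrong in each, even though the rank of the true label has improved.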

If you are iteratively improving a probabilistic multiclass classification model, always use the top-k accuracy score.

It measures how often the correct label is among the “k” labels with the highest predicted probabilities.

As you may have guessed, the top-1 accuracy is the traditional accuracy.
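A minimal NumPy sketch of the metric follows, assuming a probability matrix of shape (samples, classes) and integer labels. The function name `top_k_accuracy` and the data are illustrative, and ties between equal probabilities are broken arbitrarily by the sort.

```python
import numpy as np

def top_k_accuracy(probs, y_true, k):
    """Fraction of samples whose true label is among the k highest-probability classes."""
    # Indices of the k largest probabilities per row (their order within the k is irrelevant).
    top_k = np.argsort(probs, axis=1)[:, -k:]
    hits = (top_k == y_true[:, None]).any(axis=1)
    return hits.mean()

# Hypothetical predicted probabilities and true labels.
probs = np.array([
    [0.7, 0.2, 0.1],
    [0.1, 0.3, 0.6],
    [0.4, 0.5, 0.1],
    [0.2, 0.3, 0.5],
])
y_true = np.array([0, 2, 0, 1])

top1 = top_k_accuracy(probs, y_true, k=1)  # identical to traditional accuracy
top2 = top_k_accuracy(probs, y_true, k=2)
print(top1, top2)
```

With k=1 the function reduces to comparing the argmax against the true label, which is why top-1 accuracy and traditional accuracy coincide.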

Top-k accuracy with k > 1 is a better indicator for assessing model improvement efforts.

For instance, if the top-3 accuracy score increases from 75% to 90%, this tells us we are headed in the right direction:

That said, use top-k accuracy only to assess model improvement efforts; true predictive power is still determined by traditional accuracy.

Ideally, “Top-k Accuracy” will increase with iterations. But Accuracy can stay the same, as depicted below:

The top-k accuracy score is also available in Sklearn as `top_k_accuracy_score` in `sklearn.metrics`.
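A short usage sketch with scikit-learn is shown below. The data is made up, and `labels` is passed explicitly so the columns of the score matrix are mapped to classes unambiguously.

```python
import numpy as np
from sklearn.metrics import accuracy_score, top_k_accuracy_score

# Hypothetical true labels and predicted probabilities (one column per class).
y_true = np.array([0, 2, 0, 1])
y_score = np.array([
    [0.7, 0.2, 0.1],
    [0.1, 0.3, 0.6],
    [0.4, 0.5, 0.1],
    [0.2, 0.3, 0.5],
])

# Traditional accuracy uses only the highest-probability class.
top1 = accuracy_score(y_true, y_score.argmax(axis=1))

# Top-2 accuracy counts a hit when the true label is among the two highest scores.
top2 = top_k_accuracy_score(y_true, y_score, k=2, labels=[0, 1, 2])
print(top1, top2)
```

Tracking both numbers across iterations shows whether the true label is climbing the ranking even while traditional accuracy stays flat.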

Isn’t that a great way to assess your model improvement efforts?

👉 Over to you: What are some other ways to assess model improvement efforts?

If you consider the last decade (or 12-13 years) in ML, neural networks have dominated the narrative in most discussions.

In contrast, tree-based methods tend to be perceived as more straightforward, and as a result, they don't always receive the same level of admiration.

However, in practice, tree-based methods frequently outperform neural networks, particularly in structured data tasks.

This is a well-known fact on Kaggle, where XGBoost has become the tool of choice for top-performing submissions.

With XGBoost, one would spend a fraction of the time they would otherwise spend on models like linear/logistic regression, SVMs, etc., to achieve the same performance.

Learn about its internal details by formulating and implementing it from scratch here →

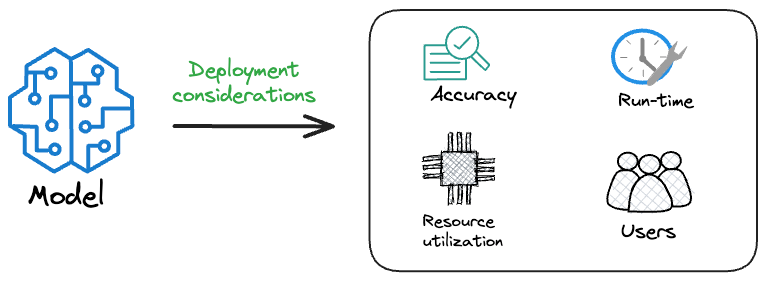

Model accuracy alone (or an equivalent performance metric) rarely determines which model will be deployed.

Much of the engineering effort goes into making the model production-friendly.

This is because the model that gets shipped is typically NEVER determined solely by performance, even though many assume that it is.

Instead, we also consider several operational and feasibility metrics, such as model size.

For instance, consider the image below. It compares the accuracy and size of a large neural network I developed to its pruned (or reduced/compressed) version:

Looking at these results, wouldn’t you strongly prefer deploying the model that is 72% smaller but still (almost) as accurate as the large model?

Of course, this depends on the task, but in most cases, it might not make any sense to deploy the large model when one of its heavily pruned versions performs equally well.
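The text does not say which pruning method was used, so the sketch below is only an illustration of one common approach, unstructured magnitude pruning, applied to a random matrix standing in for a layer's weights. The function name `magnitude_prune` and the 72% sparsity target (borrowed from the figure quoted above) are assumptions for this example.

```python
import numpy as np

def magnitude_prune(weights, sparsity):
    """Zero out roughly the `sparsity` fraction of weights with the smallest magnitudes."""
    threshold = np.quantile(np.abs(weights), sparsity)
    mask = np.abs(weights) >= threshold  # True for weights that are kept
    return weights * mask, mask

rng = np.random.default_rng(0)
weights = rng.normal(size=(256, 256))  # hypothetical dense layer weights

pruned, mask = magnitude_prune(weights, sparsity=0.72)
kept_fraction = mask.mean()
print(f"fraction of weights kept: {kept_fraction:.2f}")
```

Zeroing weights shrinks the stored model only when the result is saved in a sparse or otherwise compressed format, and the pruned model's accuracy still has to be re-measured before it is compared with the original.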

We discussed and implemented 6 model compression techniques in the article here, which ML teams regularly use to save 1000s of dollars in running ML models in production.

Learn how to compress models before deployment with implementation →