Claude Subagents vs. Agent Teams

...explained visually.

...explained visually.

Most people reach for multi-agent systems the moment a task feels complex.

That’s almost always the wrong instinct.

The right question isn’t “should I use multiple agents?” but rather “what kind of coordination does this task actually need?”

The answer to that determines everything about your architecture.

Claude gives you two distinct multi-agent paradigms: sub-agents and agent teams. They look similar on the surface. Architecturally, they solve completely different problems.

A sub-agent is a specialized Claude instance that runs in its own isolated context window.

Here’s the mental model: imagine you’re a research lead. You don’t read every primary source yourself. You delegate focused questions to researchers, they come back with distilled findings, and you synthesize everything into a coherent output.

That’s exactly what sub-agents do.

Each sub-agent gets:

When it finishes, only the final result returns to the parent. Not the full reasoning chain. Not the intermediate steps. Just the compressed output.

The point of sub-agents isn’t just parallelism, it’s compression. You’re distilling a vast amount of exploration into a clean signal, without polluting your parent agent’s context with noise.

One hard constraint: sub-agents can’t spawn other sub-agents, and they can’t talk to each other. Every result flows back to the parent. The parent is the sole coordinator.

This constraint is a feature, not a limitation. It keeps the system predictable. You always know where information flows and where decisions get made.

Here’s a minimal SDK example of defining and invoking sub-agents:

The description field is what tells the parent agent which sub-agent to invoke. Here, the prompt mentions “security vulnerabilities” so the parent routes to security-reviewer, not performance-optimizer. If the prompt had asked about latency or bottlenecks instead, the other agent would have been picked. The description is the routing signal. Keep it specific.

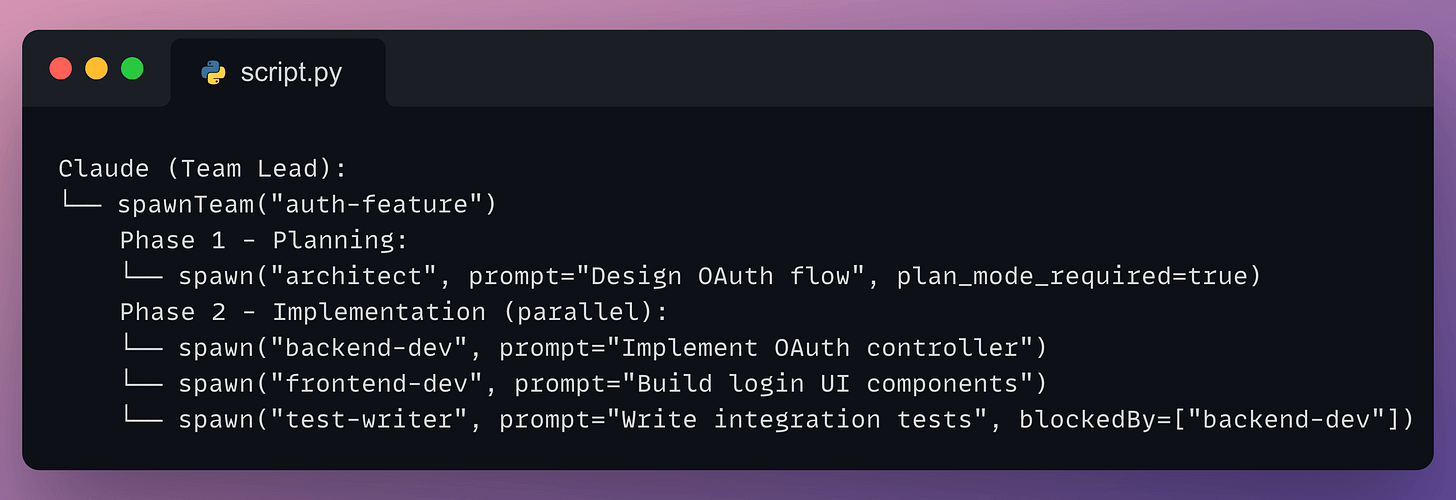

Agent teams are a fundamentally different model.

Where sub-agents are short-lived workers that complete a task and disappear, agent teams are long-running instances that persist, communicate directly with each other, and coordinate through shared state.

Think of it like the difference between hiring contractors for isolated tasks vs. assembling a team that works together in the same room.

An agent team has three moving parts:

A typical lifecycle looks like this:

Notice the blockedBy field on the test writer. That’s the shared task list doing real coordination work: the test writer won’t start until the backend agent is done, without the lead having to manually manage that sequencing.

The big difference from sub-agents is direct peer-to-peer communication. Teammates can send messages to each other, share findings, surface blockers, and negotiate without routing everything through the lead.

You can also interact with individual teammates directly. You’re not forced to go through the lead agent for everything.

Here’s how to think about the choice between them.

Sub-agents are fire-and-forget.

Agent teams are collaborative.

The clearest way to choose between them:

Most multi-agent designs fail because people split work by role instead of by context.

The intuitive instinct is to split by role: planner, implementer, tester. It feels organized. But it creates a telephone game where information degrades at every handoff.

The right mental model is context-centric decomposition.

Ask: what context does this subtask actually need? If two subtasks need deeply overlapping information, they probably belong to the same agent. If they can operate with truly isolated information and clean interfaces between them, that’s where you split.

A practical example: an agent implementing a feature should also write the tests for that feature. It already has the context. Splitting those two into separate agents creates a handoff problem that costs more than the parallelism saves.

Only separate when context can be genuinely isolated.

Regardless of which paradigm you use, these five patterns cover most real-world needs:

This is the part most articles skip.

Teams have spent months building elaborate multi-agent pipelines only to discover that better prompting on a single agent achieved equivalent results.

Start simple. Add complexity only when you can clearly measure that it’s needed.

Multi-agent systems earn their cost in three situations:

They’re the wrong call when:

One specific warning for coding: parallel agents writing code make incompatible assumptions. When you merge their work, those implicit decisions conflict in ways that are hard to debug. Sub-agents for coding should answer questions and explore, not write code simultaneously with the main agent.

Three failure modes show up constantly.

1. Vague task descriptions cause agents to duplicate each other’s work.

Every agent needs a clear objective, an expected output format, guidance on what tools or sources to use, and explicit boundaries on what it should not cover. Without this, two agents will research the same thing and neither will notice.

2. Verification agents declare victory without verifying.

Explicit, concrete instructions are non-negotiable: run the full test suite, cover these specific cases, do not mark as complete until each one passes. Vague approval criteria produce false positives.

3. Token costs compound faster than you expect.

The solution is to tier your models intelligently:

Design around context boundaries, not around roles or org charts.

Start with a single agent. Push it until you find where it breaks. That failure point tells you exactly what to add next.

Add complexity only where it solves a real, measured problem.

To learn more...

We did a crash course to help you implement reliable Agentic systems, understand the underlying challenges, and develop expertise in building Agentic apps on LLMs, which every industry cares about now.

Here’s everything we did in the crash course (with implementation):

Thanks for reading!