JSON prompting for LLMs

...explained visually.

Today, let us show you exactly what JSON prompting is and how it can drastically improve your AI outputs!

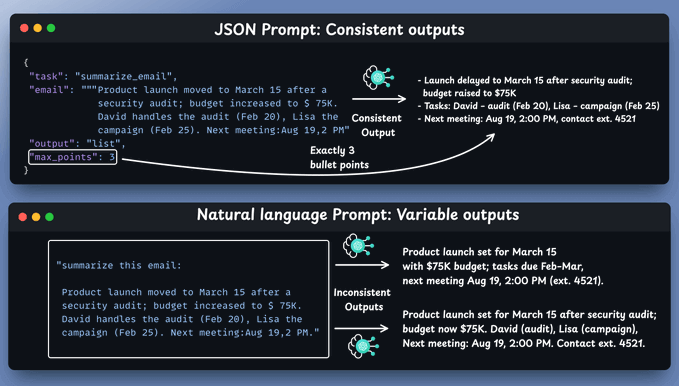

Natural language is powerful yet vague!

When you give instructions like "summarize this email" or "give me key takeaways," you leave room for interpretation, and the model may fill those gaps with guesses or hallucinations.

JSON prompts, by contrast, tend to give you much more consistent outputs.

A likely reason JSON works so well is that AI models are trained on massive amounts of structured data from APIs and web applications.

When you speak their "native language," they respond with laser precision!

Let's understand this a bit more...

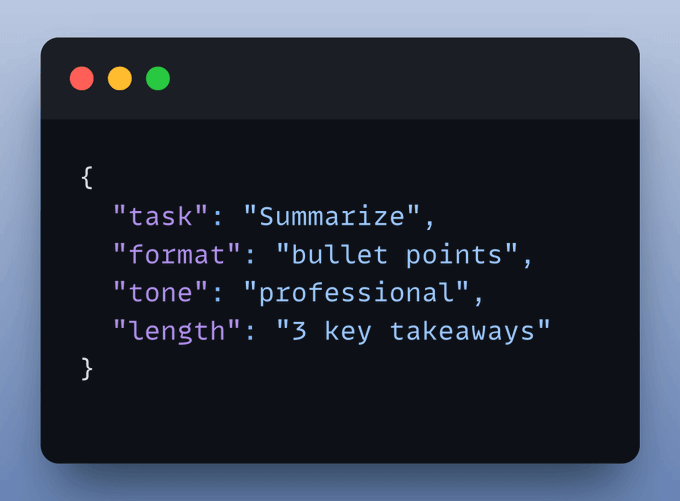

1️⃣ Structure means certainty

JSON forces you to think in terms of fields and values, which is a gift.

It eliminates gray areas and guesswork.

Here's a simple example:

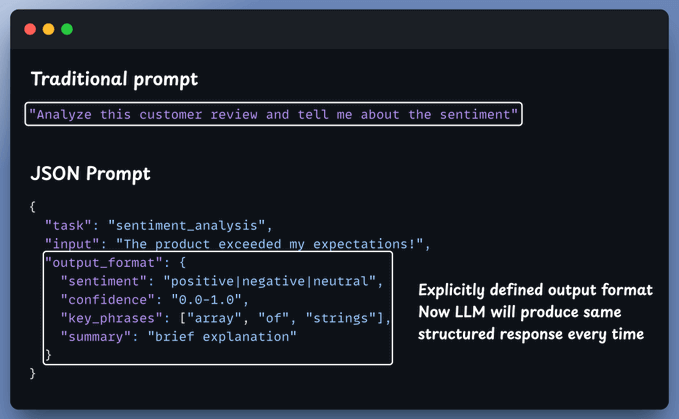
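To make the contrast concrete in code, here is a minimal sketch of the same request written both ways. The field names (`task`, `constraints`, `output_format`, and so on) are illustrative assumptions, not a standard schema; pick whatever fields fit your task.

```python
import json

# A vague natural-language prompt: length, tone, and format are left to the model.
vague_prompt = "Summarize this email."

# The same request as a JSON prompt. Every field name here is illustrative.
json_prompt = {
    "task": "summarize_email",
    "input": "<paste the email text here>",
    "constraints": {"max_sentences": 3, "tone": "neutral"},
    "output_format": {"summary": "string", "action_items": ["string"]},
}

# Serialize the dict so it can be sent to a model as plain text.
prompt_text = json.dumps(json_prompt, indent=2)
print(prompt_text)
```

Each decision the vague prompt leaves open (how long, what tone, what shape) now has a named field with an explicit value.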

2️⃣ You control the outputs

Prompting isn't just about what you ask; it's about what you expect back.

This holds whether you are generating content, reports, or insights: JSON prompts help keep the structure consistent every time.

No more surprises, just predictable results!
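Since models can still drift from the format you ask for, it helps to check the reply before using it. Below is a rough sketch of that check, assuming you asked for a `summary` string and an `action_items` list. The reply is hard-coded in place of a real model call, and `parse_reply` is a hypothetical helper name.

```python
import json

# The fields we asked the model to return, with the Python type we expect for each.
EXPECTED_FIELDS = {"summary": str, "action_items": list}

def parse_reply(raw_reply: str) -> dict:
    """Parse a model reply and check it has the expected fields and types."""
    data = json.loads(raw_reply)  # raises json.JSONDecodeError on non-JSON text
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for field, expected_type in EXPECTED_FIELDS.items():
        if field not in data:
            raise ValueError(f"missing field: {field}")
        if not isinstance(data[field], expected_type):
            raise ValueError(f"wrong type for field: {field}")
    return data

# Hypothetical reply, hard-coded here instead of calling a model.
sample_reply = '{"summary": "Meeting moved to Friday.", "action_items": ["Update calendar"]}'
result = parse_reply(sample_reply)
print(result["summary"])
```

If the check fails, you can retry the request or log the bad reply instead of passing malformed data downstream.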

3️⃣ Reusable templates → Scalability, Speed & Clean handoffs

You can turn JSON prompts into shareable templates for consistent outputs.

Teams can plug results directly into APIs, databases, and apps; no manual formatting, so work stays reliable and moves much faster.
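One way to picture a shareable template is a base prompt that gets copied and filled in per request. This is only a sketch: `SUMMARY_TEMPLATE` and `build_prompt` are hypothetical names, and the fields are illustrative.

```python
import copy
import json

# A shared base prompt. Teams reuse it and only change the input and constraints.
SUMMARY_TEMPLATE = {
    "task": "summarize",
    "input": None,
    "constraints": {"max_sentences": 3, "tone": "neutral"},
    "output_format": {"summary": "string", "key_points": ["string"]},
}

def build_prompt(text: str, **constraint_overrides) -> str:
    """Fill the template with new input text and optional constraint overrides."""
    prompt = copy.deepcopy(SUMMARY_TEMPLATE)  # never mutate the shared template
    prompt["input"] = text
    prompt["constraints"].update(constraint_overrides)
    return json.dumps(prompt, indent=2)

email_prompt = build_prompt("Hi team, the launch is moving to Friday.")
report_prompt = build_prompt("<quarterly report text>", max_sentences=5)
print(report_prompt)
```

Because every prompt comes from the same template, every reply is expected in the same `output_format`, which is what makes the handoff to APIs and databases clean.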

We did something similar when we built a full-fledged MCP workflow using tools, resources, and prompts.

So, are JSON prompts the best option?

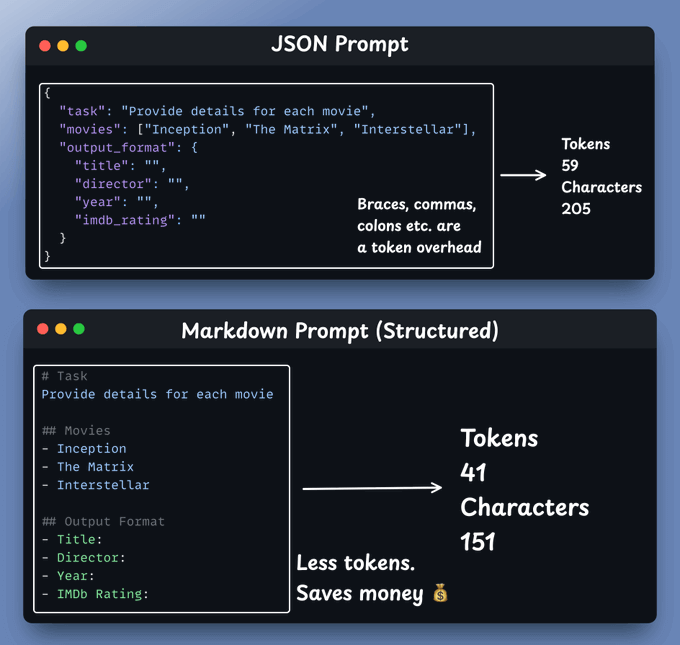

Well, good alternatives exist!

Many models excel at other formats, such as XML or Markdown.

So what matters most is the structure, not the specific syntax.
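To see the structure-over-syntax point in code, here is a small sketch that renders one prompt spec as JSON, XML, and Markdown. The spec fields and the `to_json`, `to_xml`, and `to_markdown` helpers are assumptions made for illustration; which format a given model handles best is something to test yourself.

```python
import json
import xml.etree.ElementTree as ET

# One prompt spec; the fields are illustrative. Values are strings so they
# can be written into XML text nodes as-is.
spec = {"task": "summarize", "tone": "neutral", "max_sentences": "3"}

def to_json(fields: dict) -> str:
    return json.dumps(fields, indent=2)

def to_xml(fields: dict) -> str:
    root = ET.Element("prompt")
    for name, value in fields.items():
        ET.SubElement(root, name).text = value
    return ET.tostring(root, encoding="unicode")

def to_markdown(fields: dict) -> str:
    return "\n".join(f"- **{name}**: {value}" for name, value in fields.items())

for render in (to_json, to_xml, to_markdown):
    print(render(spec))
    print()
```

All three outputs carry the same fields and values. Only the wrapping changes.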

To summarise:

Structured JSON prompting for LLMs is like writing modular code: it brings clarity of thought, makes adding new requirements easier, and improves communication with AI.

It's not just a technique but a habit worth developing for cleaner AI interactions.

Thanks for reading!