Build Agents That Never Forget

A first-principles walk through agent memory (open-source).

An LLM is stateless by design. Every API call starts fresh.

And the “memory” you feel when chatting with ChatGPT is an illusion created by re-sending the entire conversation history with every request.

That trick works for casual chat. It falls apart the moment you try to build a real agent.

Here are 7 failure modes that show up the instant you skip memory:

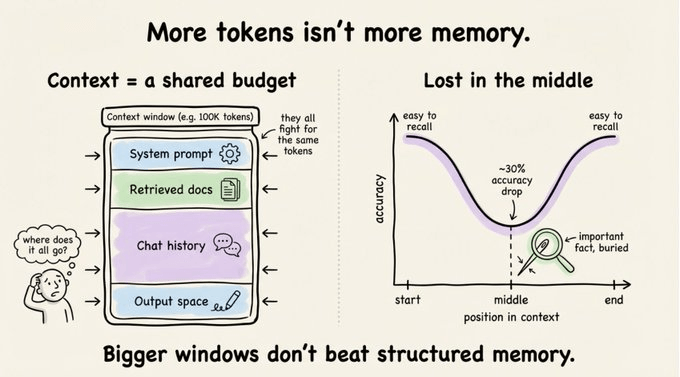

The obvious response is “throw more context at it.” That’s why 128K and 200K token windows feel like they should solve everything.

They don’t.

Accuracy drops over 30% when relevant information sits in the middle of a long context. This is the well-documented “lost in the middle” effect.

Context is a shared budget. The system prompt, retrieved docs, conversation history, and output all fight for the same tokens.
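To make the budget concrete, here is a minimal sketch of the arithmetic. The token counts and the `remaining_for_history` helper are made up for illustration; only the 128K window size comes from the discussion above.

```python
# Hypothetical numbers for illustration; none come from a real deployment.
CONTEXT_WINDOW = 128_000  # total tokens the model accepts per call


def remaining_for_history(system_prompt: int, retrieved_docs: int,
                          reserved_output: int,
                          window: int = CONTEXT_WINDOW) -> int:
    """Tokens left for conversation history once the other consumers are paid."""
    used = system_prompt + retrieved_docs + reserved_output
    if used > window:
        raise ValueError(f"budget exceeded by {used - window} tokens")
    return window - used


history_budget = remaining_for_history(
    system_prompt=2_000, retrieved_docs=60_000, reserved_output=4_000
)
print(history_budget)  # 62000
```

Every token you hand to retrieved docs is a token the conversation history can't use, and the reverse.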

Even at 100K tokens, the absence of persistence, prioritization, and salience makes raw context length insufficient.

Memory isn’t about cramming more text into the prompt. It’s about structuring what the agent remembers so it can find what matters.

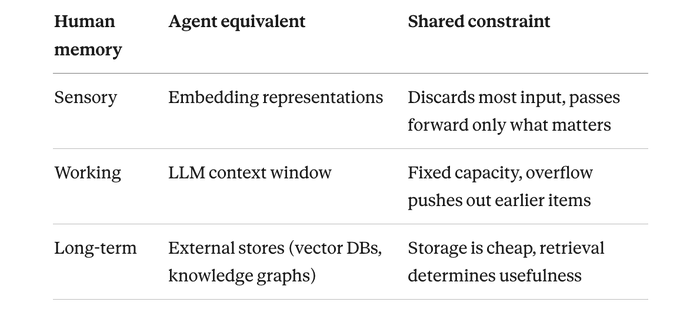

Lilian Weng’s 2023 formulation has become the default framework here.

Agent = LLM + Memory + Planning + Tool Use.

The four co-equal pillars.

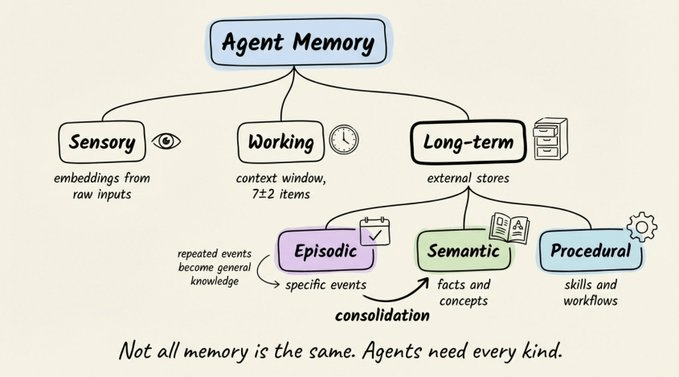

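Her taxonomy borrows from cognitive science, where human memory splits into three systems:

- Sensory memory: brief impressions of raw input.
- Short-term (working) memory: what you are actively holding in mind.
- Long-term memory: what persists for days to decades.

Each maps directly to a component in modern agent architectures:

- Sensory memory maps to embeddings of raw inputs.
- Short-term memory maps to the context window (in-context learning).
- Long-term memory maps to an external store the agent queries at run time.

Long-term memory itself splits further:

- Episodic: specific events and experiences.
- Semantic: general facts and concepts.
- Procedural: skills and routines performed without conscious recall.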

The bridge between episodic and semantic is memory consolidation: repeated specific events distilling into general knowledge.

An agent that notices “users consistently prefer executive summaries” across dozens of interactions should turn that into a reusable rule. Without consolidation, your agent replays individual events rather than learning from them.

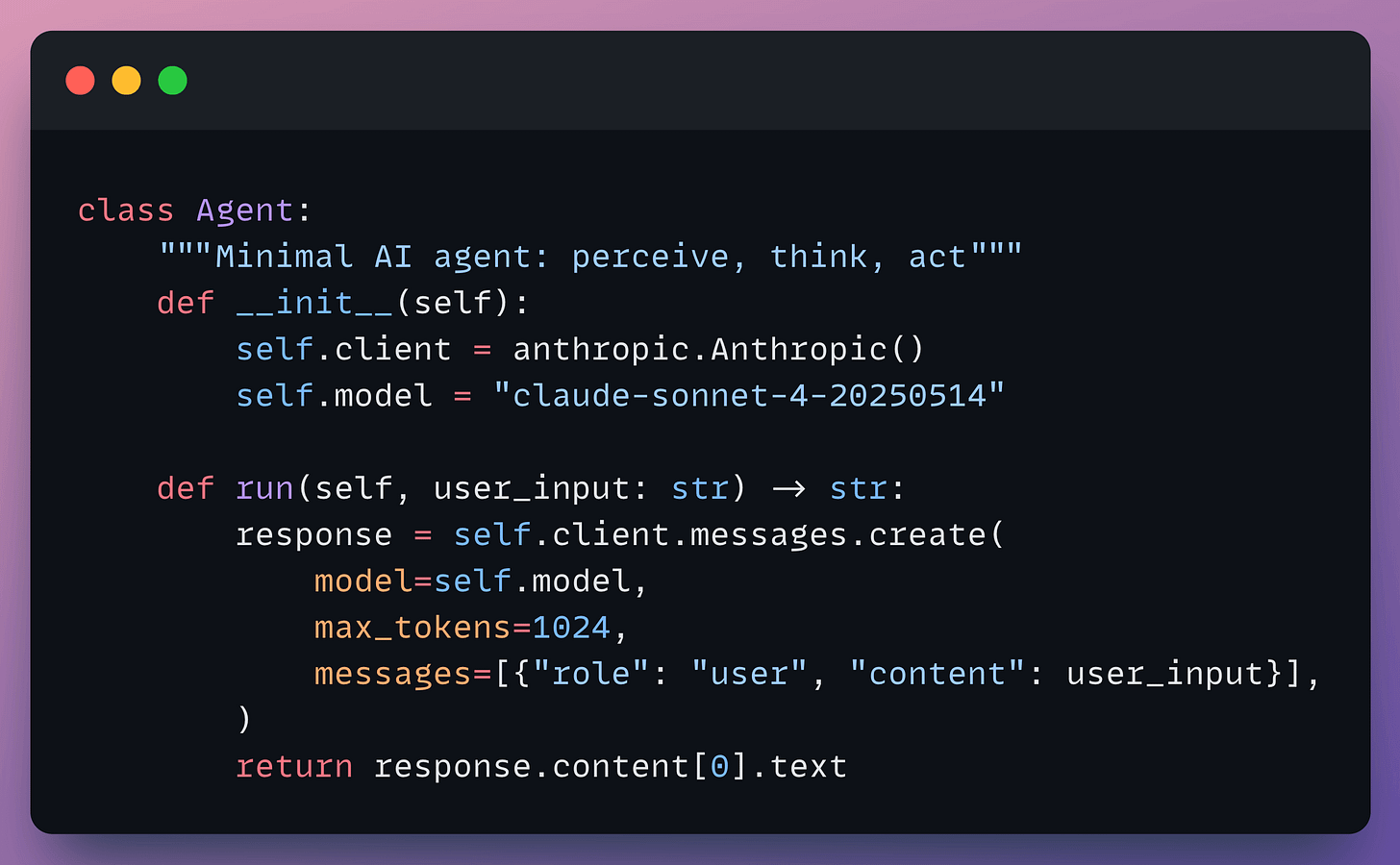

If you strip away the frameworks, an agent is a loop: perceive, think, act.

Tell it “I have 4 apples,” then ask “I ate one, how many left?” and it has no idea which apples you’re talking about. Each call exists in isolation.

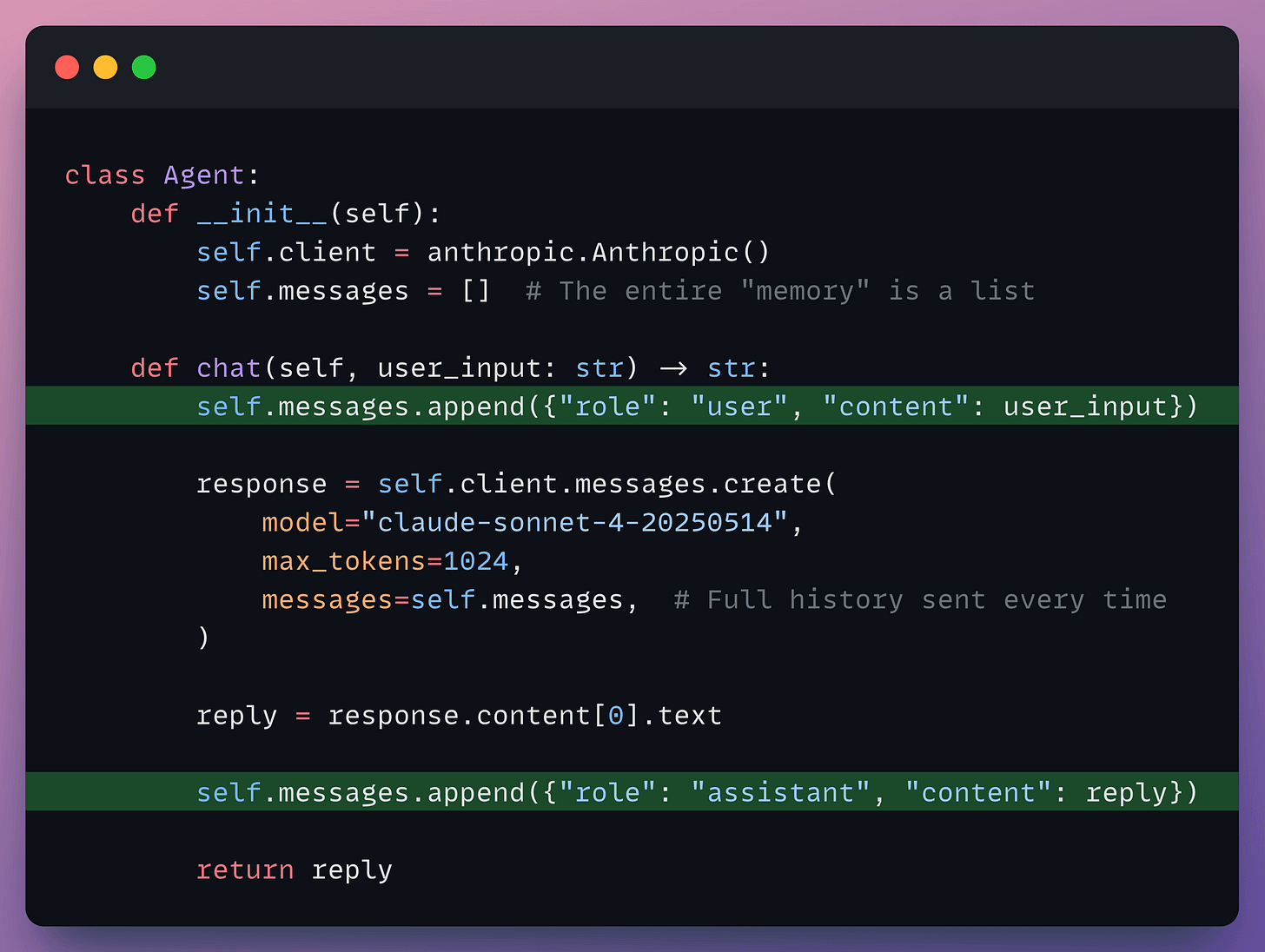

The first fix everyone reaches for is maintaining the interaction in a messages list:

Multi-turn works now. The apples question gets answered correctly because the full conversation re-ships with every call.
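Here is a minimal sketch of the difference. `fake_llm` is a hypothetical rule-based stand-in for a real chat-completion call, not any provider's API. It can only use what is inside the `messages` it receives, which is exactly the constraint a real model has.

```python
import re


def fake_llm(messages: list[dict]) -> str:
    """Hypothetical stand-in for a chat model: it sees only `messages`."""
    text = " ".join(m["content"] for m in messages if m["role"] == "user")
    have = re.search(r"I have (\d+) apples", text)
    ate_one = "I ate one" in text
    if have and ate_one:
        return f"You have {int(have.group(1)) - 1} apples left."
    if ate_one:
        return "I don't know which apples you mean."
    return "Got it."


# Stateless: each call ships only the latest message.
fake_llm([{"role": "user", "content": "I have 4 apples."}])
stateless_reply = fake_llm(
    [{"role": "user", "content": "I ate one, how many left?"}]
)

# Stateful: the caller keeps the history and re-sends all of it every turn.
messages: list[dict] = []


def chat(user_input: str) -> str:
    messages.append({"role": "user", "content": user_input})
    reply = fake_llm(messages)
    messages.append({"role": "assistant", "content": reply})
    return reply


chat("I have 4 apples.")
stateful_reply = chat("I ate one, how many left?")

print(stateless_reply)  # I don't know which apples you mean.
print(stateful_reply)   # You have 3 apples left.
```

The model didn't change between the two runs. The only difference is that the caller re-sent the history.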

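Two problems show up fast:

- Nothing persists. The list lives in process memory, so restarting the script wipes it.
- The list only grows. Every turn adds tokens, so cost and latency climb until the history no longer fits in the context window.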

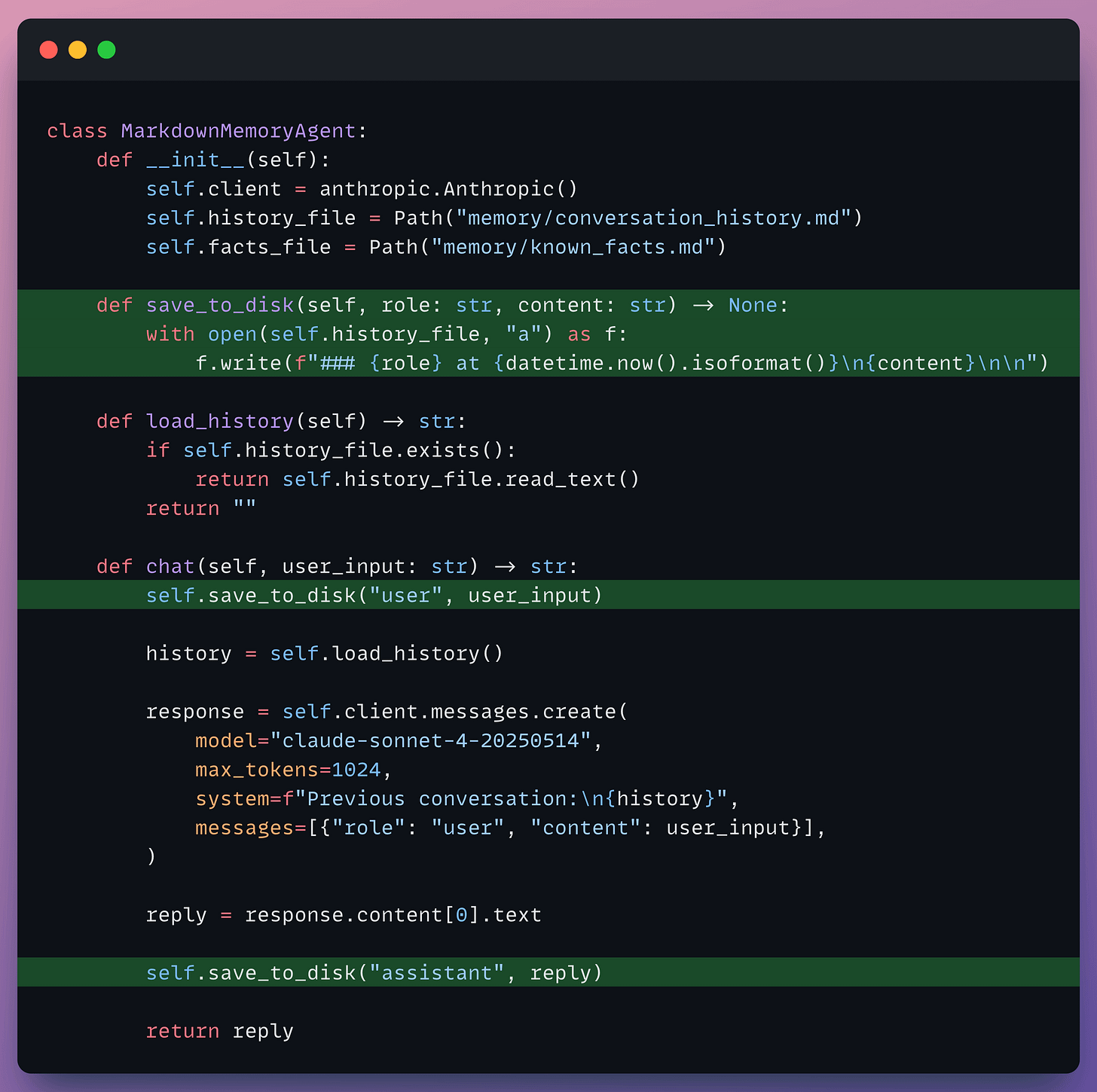

The next move is writing memory to disk.

Markdown files are a natural fit since they are human-readable, Git-friendly, and the agent can read them back as plain text. Claude Code uses exactly this pattern with CLAUDE.md and MEMORY.md files:

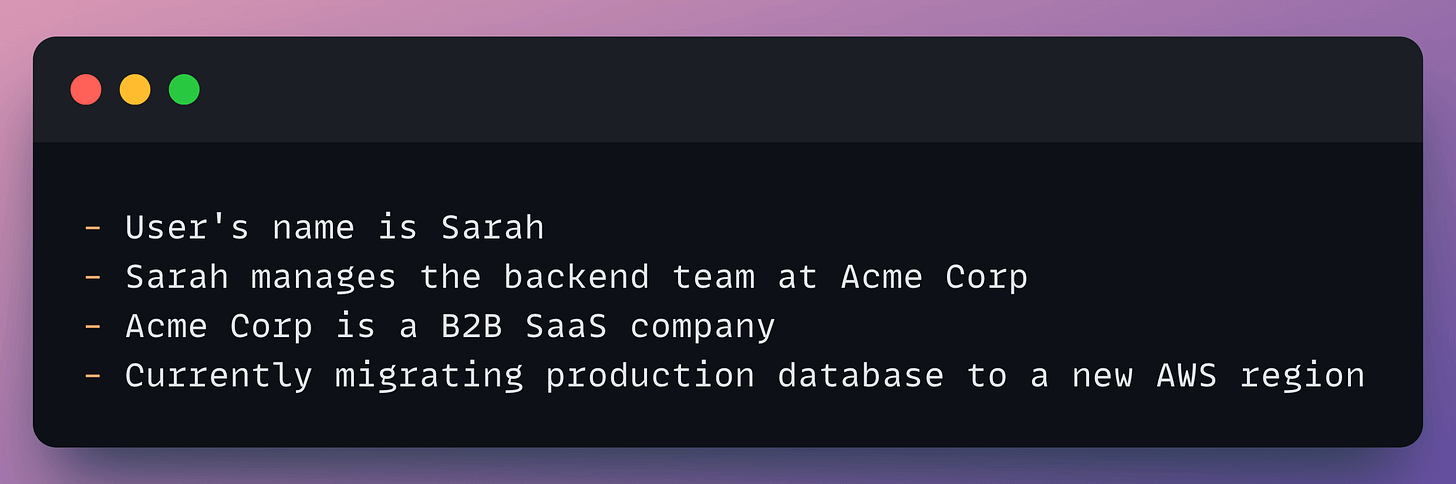

Persistence is solved: restart the script and the conversation is still on disk. You could also maintain a separate facts file that the agent extracts over time:
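A minimal sketch of that pattern, using only the standard library. The file names, the helper functions, and the two example facts about Sarah are hypothetical placeholders. The extraction step (an LLM deciding what counts as a fact) is not shown.

```python
import tempfile
from pathlib import Path


def append_turn(log: Path, role: str, content: str) -> None:
    """Append one conversation turn to a markdown log."""
    with log.open("a", encoding="utf-8") as f:
        f.write(f"**{role}**: {content}\n\n")


def load_facts(facts: Path) -> list[str]:
    """Read markdown bullets back as strings; a missing file means no facts."""
    if not facts.exists():
        return []
    lines = facts.read_text(encoding="utf-8").splitlines()
    return [line[2:] for line in lines if line.startswith("- ")]


def add_fact(facts: Path, fact: str) -> None:
    """Append a fact as a markdown bullet, skipping exact duplicates."""
    if fact not in load_facts(facts):
        with facts.open("a", encoding="utf-8") as f:
            f.write(f"- {fact}\n")


# A temp directory keeps the sketch from littering your working directory.
memory_dir = Path(tempfile.mkdtemp())
facts_file = memory_dir / "facts.md"
log_file = memory_dir / "conversation.md"

append_turn(log_file, "user", "Hi, I'm Sarah.")
add_fact(facts_file, "Sarah prefers short emails")       # made-up fact
add_fact(facts_file, "Sarah prefers short emails")       # duplicate, ignored
add_fact(facts_file, "Sarah's company is in logistics")  # made-up fact
```

Because the files are plain markdown, `load_facts` returns the same list after a restart, and nothing stops you from editing them by hand.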

You can open the file in any editor, see exactly what the agent knows, and fix it by hand. Genuinely useful for prototyping.

With 4 facts, this works perfectly. Load the entire file into context and the LLM handles any question about Sarah, her company, or her industry.

Now fast-forward three months. Your agent has 2,000 extracted facts and 200 conversation logs. That’s 500K+ tokens of markdown on disk, and your context window is 128K.

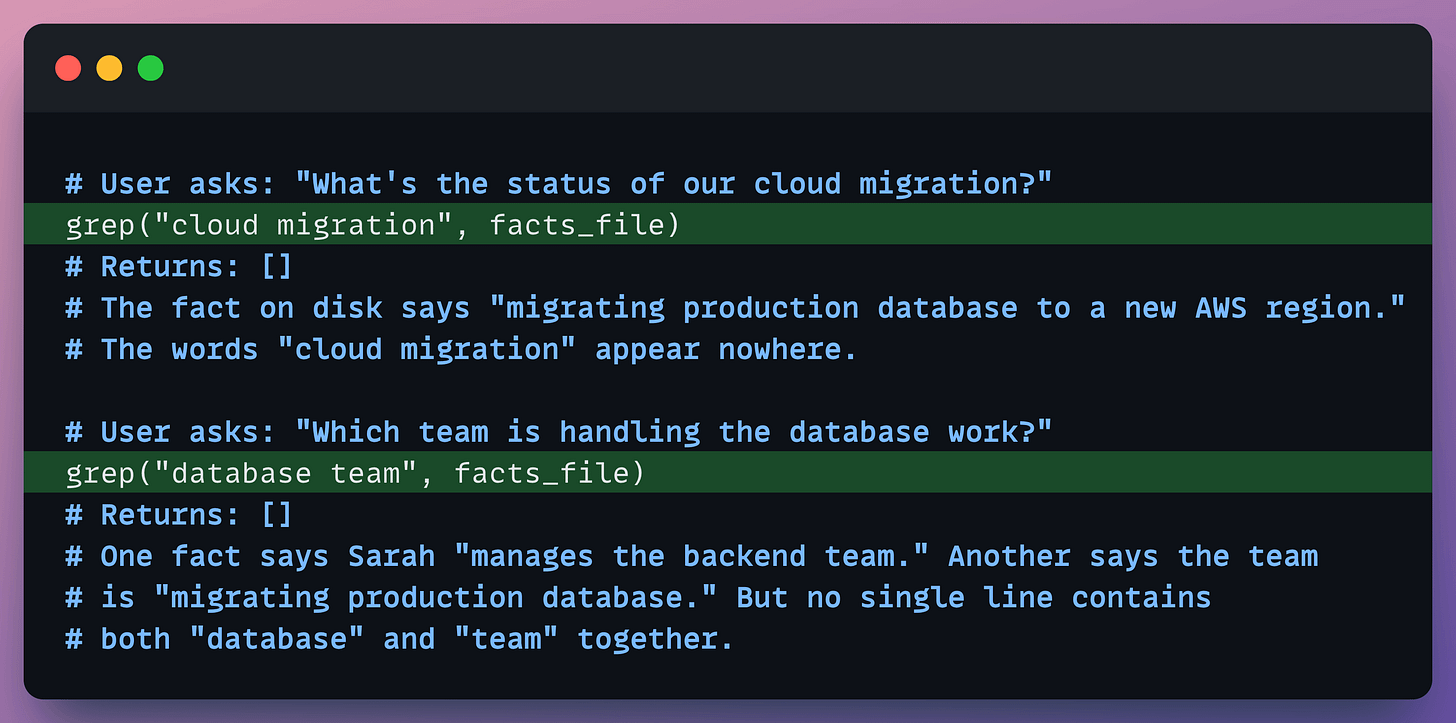

You can no longer load everything. You need to selectively retrieve only the facts relevant to the current query. With flat files, your only option is keyword search:
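A minimal sketch of what that looks like, and where it breaks. The two facts are invented for illustration, and `keyword_search` is a deliberately naive word-overlap matcher.

```python
def keyword_search(facts: list[str], query: str) -> list[str]:
    """Return facts that share at least one lowercase word with the query."""
    query_words = set(query.lower().split())
    return [f for f in facts if query_words & set(f.lower().split())]


# Invented facts, for illustration only.
facts = [
    "Sarah works in healthcare",
    "Sarah's company has 40 employees",
]

hit = keyword_search(facts, "healthcare")
miss = keyword_search(facts, "medical sector")

print(hit)   # ['Sarah works in healthcare']
print(miss)  # []
```

“Medical sector” and “healthcare” mean the same thing to a person, but they share no words, so the second query comes back empty.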

At small scale, markdown files work. At real scale, they force keyword retrieval, and keywords can’t handle synonyms, paraphrases, or connections across facts.

The information is on disk. But you can’t load all of it, and keyword search is too brittle to find the right pieces.

OpenClaw, for instance, stores memory as markdown checkpoint files, and over weeks of daily use, earlier facts quietly slip away as context accumulates and gets compacted. The storage is there but the retrieval isn’t.

The next step is to chunk the markdown, embed the chunks, and search by cosine similarity, which solves the synonym problem.
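Here is a rough sketch of the retrieval half. The three-dimensional vectors are hand-written stand-ins for real embeddings, and the chunk texts are invented. A real system would get its vectors from an embedding model.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: dot product divided by the product of the norms."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hand-written toy vectors (assumption). Dimensions, loosely:
# [medicine, company size, sports].
chunks: dict[str, list[float]] = {
    "Sarah works in healthcare": [0.9, 0.1, 0.0],
    "Sarah's company has 40 employees": [0.1, 0.9, 0.0],
    "Sarah plays tennis on weekends": [0.0, 0.1, 0.9],
}
query_vector = [0.8, 0.2, 0.1]  # pretend embedding of "medical sector"


def top_k(query: list[float], store: dict[str, list[float]], k: int = 1) -> list[str]:
    """Rank stored chunks by similarity to the query and keep the best k."""
    ranked = sorted(store, key=lambda text: cosine(query, store[text]), reverse=True)
    return ranked[:k]


best = top_k(query_vector, chunks)
print(best)  # ['Sarah works in healthcare']
```

The query shares no words with the winning chunk. It wins because the two vectors point in nearly the same direction.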

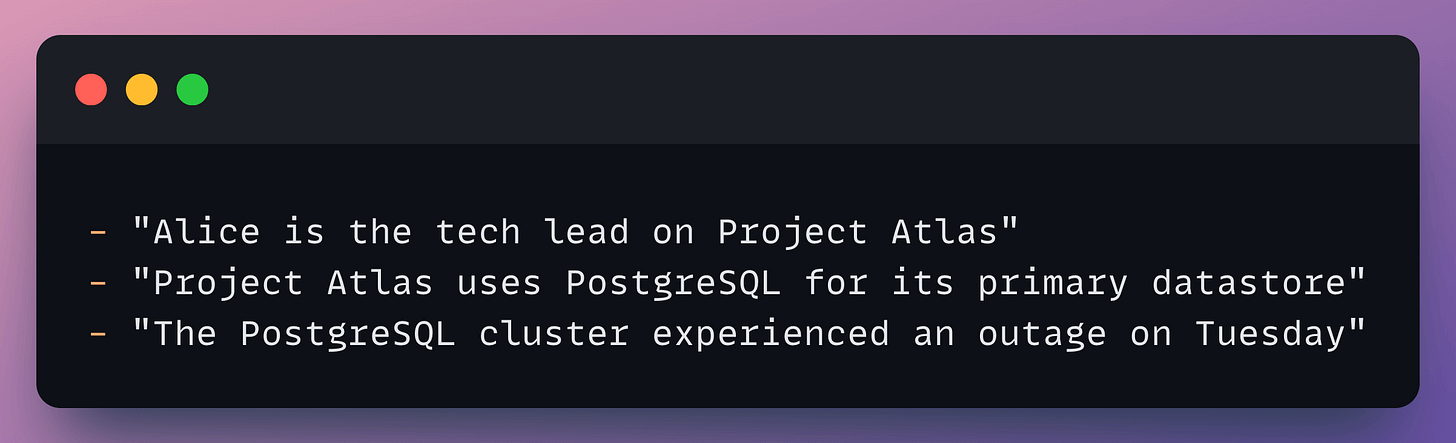

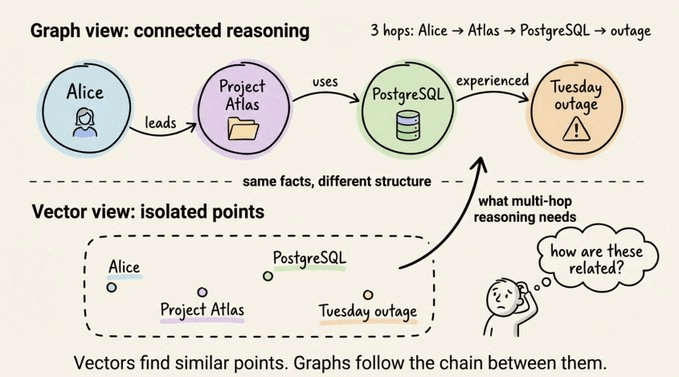

But then you face a new problem. Consider these three facts in your vector DB:

User asks: “Was Alice’s project affected by Tuesday’s outage?”

The query mentions Alice and Tuesday’s outage, so vector search ranks the first and third facts high.

But the critical bridge, “Project Atlas uses PostgreSQL,” mentions neither Alice nor Tuesday. It’s the connecting piece, and it’s the one that won’t surface.

Each fact is an isolated point in embedding space. The connective tissue linking them is invisible to vectors.

This isn’t an edge case but rather the normal shape of real-world questions.

Business knowledge is inherently relational, and any question that crosses two or more hops exceeds what flat vector retrieval can answer.
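A small sketch shows why a graph handles this case. Only the middle triple (“Project Atlas uses PostgreSQL”) is quoted from the example. The wording of the other two triples and the relation names are assumptions.

```python
from collections import deque

triples = [
    ("Alice", "leads", "Project Atlas"),                # assumed wording
    ("Project Atlas", "uses", "PostgreSQL"),            # quoted in the text
    ("PostgreSQL", "affected_by", "Tuesday's outage"),  # assumed wording
]


def build_graph(edges):
    """Undirected adjacency map: node -> list of (relation, neighbour)."""
    graph = {}
    for subj, rel, obj in edges:
        graph.setdefault(subj, []).append((rel, obj))
        graph.setdefault(obj, []).append((rel, subj))
    return graph


def find_path(graph, start, goal):
    """Breadth-first search; returns the list of nodes on a shortest path, or None."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        if path[-1] == goal:
            return path
        for _, neighbour in graph.get(path[-1], []):
            if neighbour not in seen:
                seen.add(neighbour)
                queue.append(path + [neighbour])
    return None


path = find_path(build_graph(triples), "Alice", "Tuesday's outage")
print(path)  # ['Alice', 'Project Atlas', 'PostgreSQL', "Tuesday's outage"]
```

The bridge fact never has to look similar to the query. It gets found because it sits on the path between the two entities the query does mention.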

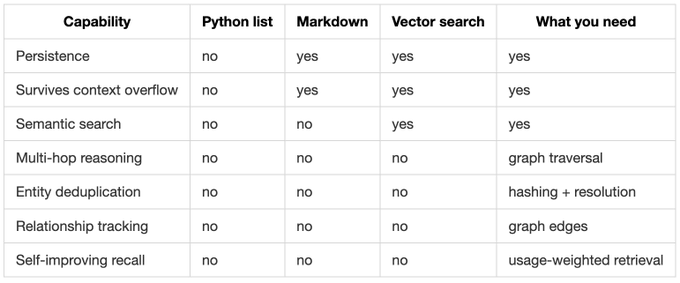

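Each layer fixes the previous pain but reveals a deeper one:

- A messages list gives you multi-turn context but no persistence.
- Markdown files give you persistence but only keyword retrieval.
- Vector search gives you semantic retrieval but no relational reasoning.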

You need persistence, semantic understanding, and relational reasoning in a single memory layer.

Building this yourself means gluing together a vector database, a graph database, a relational store, an entity extractor, a deduplication pipeline, and an edge-weighting system.

That’s weeks of infrastructure work before you write a single line of agent logic.

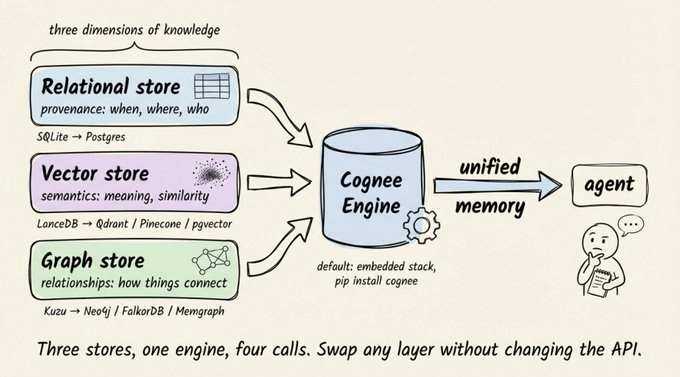

Cognee is an open-source knowledge engine built for agent memory. It combines vector search with knowledge graphs and a relational provenance layer into a single system.

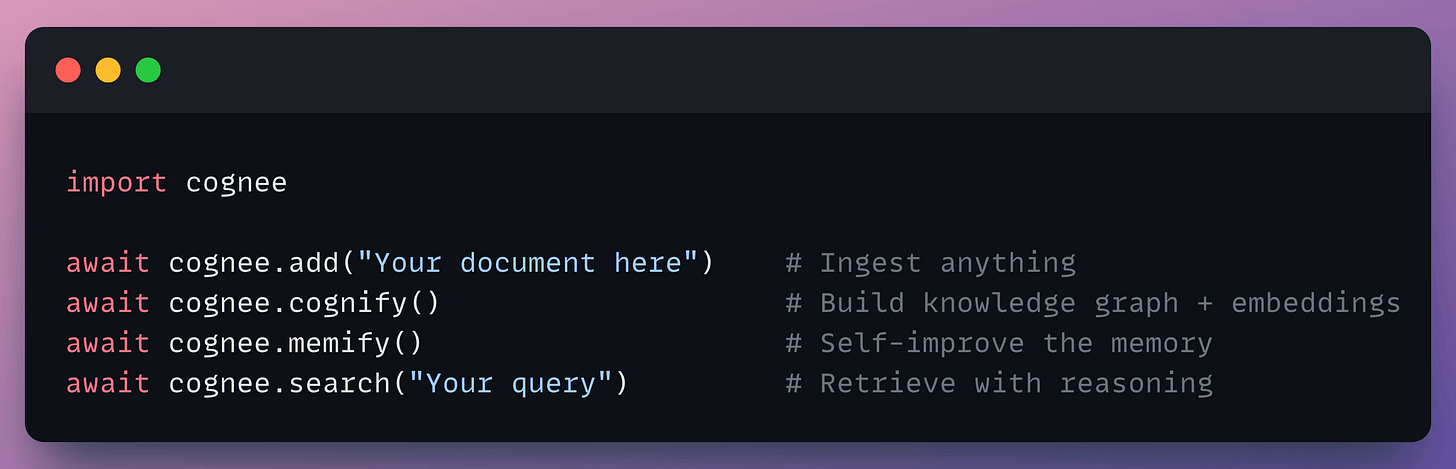

The entire API surface is four async calls:

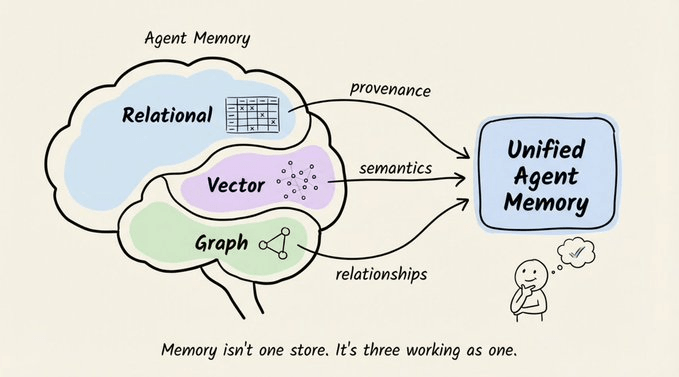

Under the hood, these four calls encapsulate a three-store architecture.

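Each store captures a dimension of knowledge the others can’t:

- The relational store tracks provenance: which source each piece of knowledge came from.
- The vector store captures semantic similarity.
- The graph store captures relationships between entities.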

If you flatten any of these, you’ll lose information that matters for retrieval accuracy.

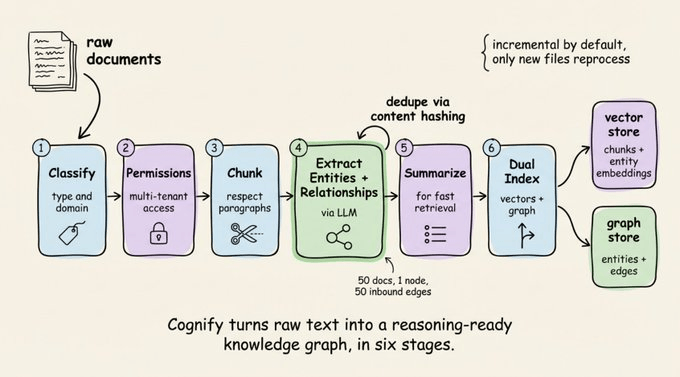

cognee.cognify() runs a multi-stage pipeline that converts raw text into structured, interconnected knowledge:

The deduplication step matters more than it sounds. If the same entity shows up across 50 documents, Cognee merges it into a single graph node with 50 inbound edges.

Your agent no longer sees “Alice” as 50 different strangers. And the pipeline is incremental by default, so only new or updated files get reprocessed.
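The idea behind deduplication can be sketched in a few lines. This is not Cognee's implementation. `canonical` is a deliberately naive normalizer, and real entity resolution has to handle aliases, typos, and different people who share a name.

```python
from collections import defaultdict


def canonical(name: str) -> str:
    """Naive normalisation (assumption): lowercase and collapse whitespace."""
    return " ".join(name.lower().split())


def merge_mentions(mentions: list[tuple[str, str]]) -> dict[str, set[str]]:
    """Map each canonical entity to the set of documents that mention it."""
    nodes: dict[str, set[str]] = defaultdict(set)
    for doc_id, name in mentions:
        nodes[canonical(name)].add(doc_id)
    return dict(nodes)


# (document id, entity mention) pairs, invented for illustration.
mentions = [
    ("doc1", "Alice"),
    ("doc2", "alice "),
    ("doc3", "ALICE"),
    ("doc3", "Bob"),
]
nodes = merge_mentions(mentions)
```

Three spellings of Alice collapse into one node with three inbound document links, which is the one-node-many-edges shape described above.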

Every graph node has a corresponding embedding. This dual representation is the core trick since it allows you to enter through vectors (find semantically similar content) and exit through the graph (follow relationships to connected entities), or the reverse.

That’s what makes multi-hop queries work without sacrificing semantic search.
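Here is a toy sketch of “enter through vectors, exit through the graph.” The embeddings, the edges, and the `retrieve` function are all invented for illustration and say nothing about how Cognee stores or ranks things internally.

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


# Every node has both an embedding and graph edges (all values made up).
embeddings = {
    "Alice": [0.9, 0.1, 0.1],
    "Tuesday's outage": [0.1, 0.9, 0.1],
    "Project Atlas": [0.2, 0.0, 0.9],
    "PostgreSQL": [0.0, 0.2, 0.9],
}
edges = {
    "Alice": ["Project Atlas"],
    "Project Atlas": ["Alice", "PostgreSQL"],
    "PostgreSQL": ["Project Atlas", "Tuesday's outage"],
    "Tuesday's outage": ["PostgreSQL"],
}


def retrieve(query_vec, k=2, hops=1):
    """Seed with the k most similar nodes, then expand along graph edges."""
    ranked = sorted(
        embeddings, key=lambda n: cosine(query_vec, embeddings[n]), reverse=True
    )
    frontier = set(ranked[:k])  # enter through vectors
    found = set(frontier)
    for _ in range(hops):       # exit through the graph
        frontier = {nb for n in frontier for nb in edges[n]} - found
        found |= frontier
    return found


query_vec = [0.7, 0.7, 0.1]  # pretend embedding of the Alice/outage question
vector_only = retrieve(query_vec, hops=0)
with_graph = retrieve(query_vec, hops=1)
```

With `hops=0` you get only Alice and the outage. One hop of expansion pulls in Project Atlas and PostgreSQL, the bridge nodes that similarity alone ranked last.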

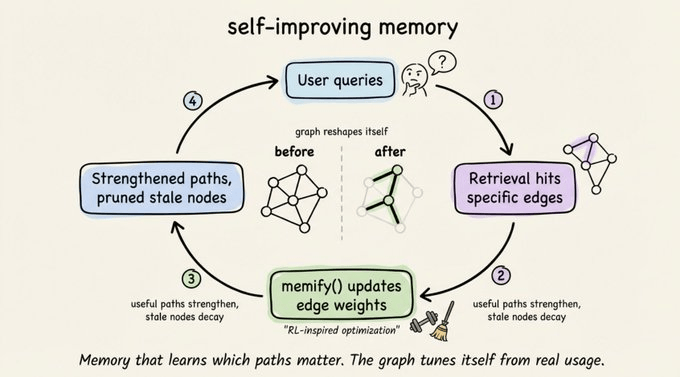

memify() is another practical detail worth noting. It runs an RL-inspired optimization pass over the graph:

A customer support agent’s graph naturally strengthens paths through product docs and refund policies while letting rarely-queried HR edges decay. The graph develops its own sense of relevance over time.
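The strengthen-and-decay behavior can be illustrated with a generic usage-based weighting rule. This is not Cognee's algorithm. The `boost`, `decay`, and `floor` parameters and the edge names are assumptions chosen to make the effect visible.

```python
def update_weights(weights, used_edges, boost=0.1, decay=0.95, floor=0.01):
    """Reinforce edges used by a retrieval, decay the rest.

    A generic sketch (assumption), not any library's actual update rule.
    """
    updated = {}
    for edge, w in weights.items():
        if edge in used_edges:
            updated[edge] = min(1.0, w + boost)    # reinforce, capped at 1.0
        else:
            updated[edge] = max(floor, w * decay)  # decay, never below floor
    return updated


# Two edges start with equal weight (hypothetical names).
weights = {
    ("refund", "policy_doc"): 0.5,
    ("employee", "hr_handbook"): 0.5,
}

# Three support queries in a row all travel the refund edge.
for _ in range(3):
    weights = update_weights(weights, used_edges={("refund", "policy_doc")})
```

After three rounds the refund edge has climbed to roughly 0.8 while the HR edge has slipped to roughly 0.43. Nothing was deleted, but the ranking now reflects how the graph is actually used.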

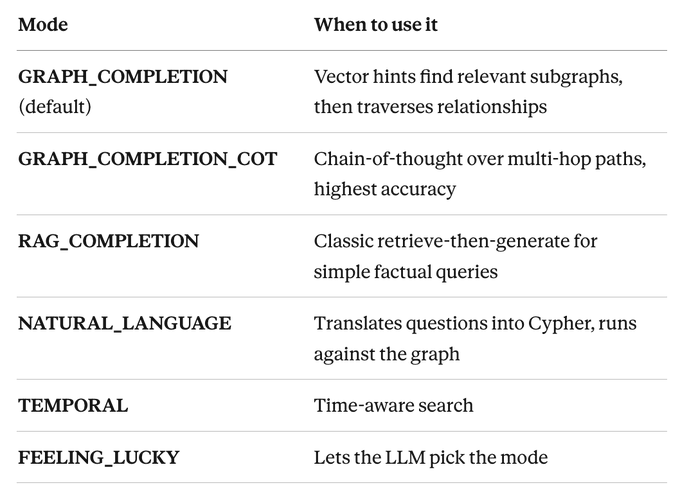

Cognee ships 14 search modes, but these are the most useful ones:

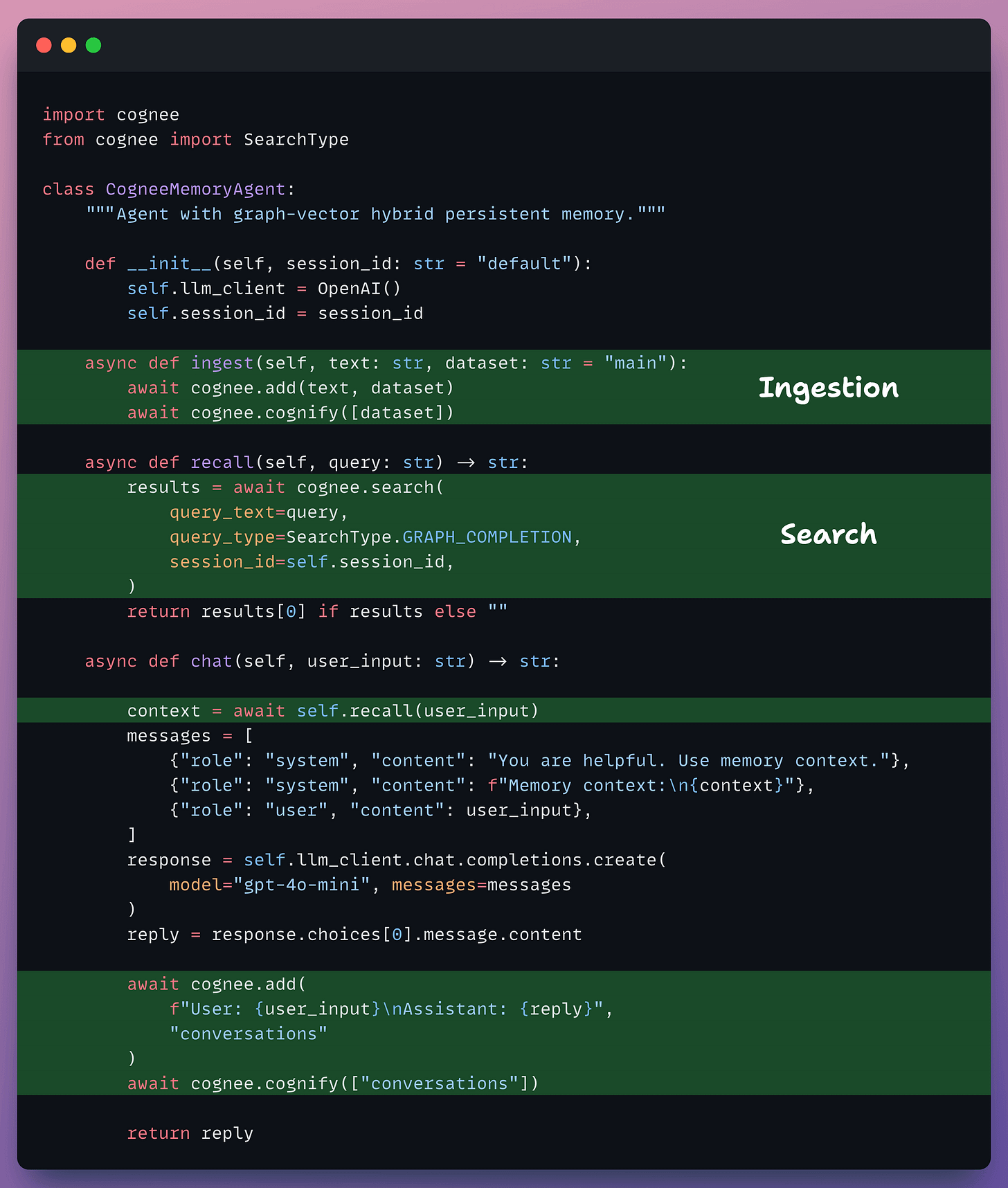

Here’s the complete pattern wiring Cognee into the perceive-think-act loop:

The memory cycle follows: ingest, extract, store, retrieve, respond, store again.

Each turn enriches the knowledge graph, and incremental processing means you only pay to index new content.
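The shape of that cycle can be sketched without committing to any particular backend. The `Memory` protocol, its `remember` and `recall` method names, and the `ListMemory` stand-in are all hypothetical. In a Cognee-backed version, `remember` would wrap the ingest calls and `recall` the search call, and that wiring is not shown here.

```python
import asyncio
from typing import Callable, Protocol


class Memory(Protocol):
    """Hypothetical interface; not the API of any real library."""

    async def remember(self, text: str) -> None: ...
    async def recall(self, query: str) -> list[str]: ...


class ListMemory:
    """Toy stand-in: stores strings, recalls by shared words."""

    def __init__(self) -> None:
        self.items: list[str] = []

    async def remember(self, text: str) -> None:
        self.items.append(text)

    async def recall(self, query: str) -> list[str]:
        words = set(query.lower().split())
        return [t for t in self.items if words & set(t.lower().split())]


async def agent_turn(memory: Memory, user_input: str,
                     llm: Callable[[str], str]) -> str:
    context = await memory.recall(user_input)                # retrieve
    prompt = ("Known context:\n" + "\n".join(context)
              + f"\n\nUser: {user_input}")
    reply = llm(prompt)                                      # respond
    await memory.remember(f"user said: {user_input}")        # store again
    await memory.remember(f"agent replied: {reply}")
    return reply


prompts: list[str] = []


def recording_llm(prompt: str) -> str:
    """Placeholder model call that records the prompt it was given."""
    prompts.append(prompt)
    return "noted"


async def main() -> None:
    memory = ListMemory()
    await agent_turn(memory, "my project is Atlas", recording_llm)
    await agent_turn(memory, "which project is mine?", recording_llm)


asyncio.run(main())
```

The second prompt contains the first turn's statement even though the caller never re-sent it. The agent code depends only on the two-method interface, so the toy store could be swapped for a real memory layer without touching `agent_turn`.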

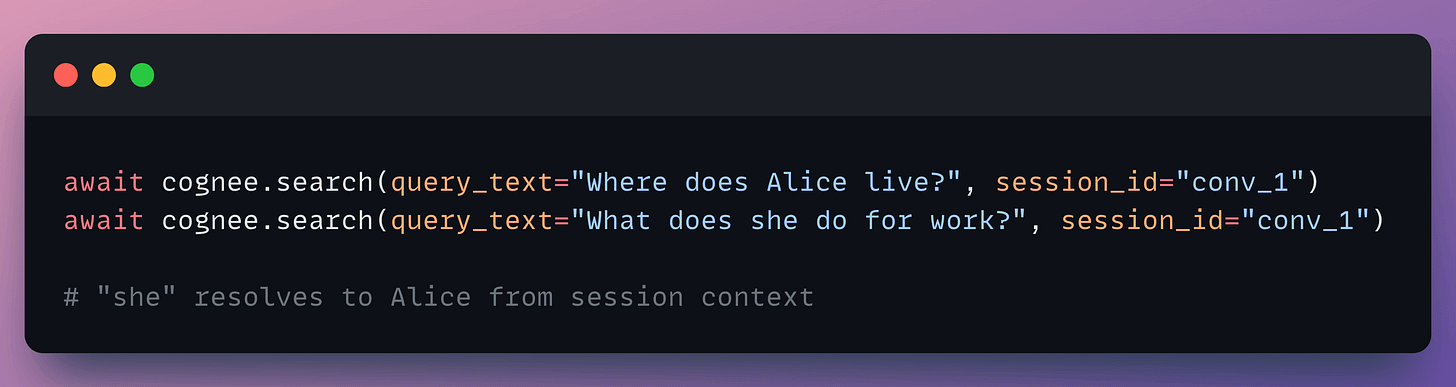

Session memory handles pronoun resolution automatically:

Multi-tenancy is built in at the graph level with per-dataset permissions (read, write, delete, share).

If you’re building an agent today, the real starting question is: “what does my agent need to remember, and what kind of questions will it answer?”

If your queries only need similarity search (“find conversations like this one”), vector-only memory works.

The moment queries cross entity boundaries (“Was Alice’s project affected by Tuesday’s outage?”), you need graph traversal.

You can wire together separate vector, graph, and relational stores yourself. Teams that go this route typically burn weeks on infrastructure for a memory layer that still doesn’t learn from its own usage.

Cognee collapses that into four API calls. Embedded defaults get you running in minutes. Swappable backends (Postgres, Qdrant, Neo4j) take you to production without changing your agent code.

Intelligence requires structure, not just storage. The three storage paradigms (relational, vector, graph) aren’t competing options. They’re complementary layers of the same memory system.

Thanks for reading!